From experimentation to operations: what weekend-built AI data platforms teach us about production readiness

From experimentation to operations: what weekend-built AI data platforms teach us about production readiness

A weekend demo can win the room. It cannot earn production trust.

A weekend is enough to prove an AI data idea can work. It is nowhere near enough to prove the platform can survive identity abuse, runaway cost, brittle orchestration, and the first executive who asks who owns it on Monday morning.

The uncomfortable truth is that modern AI and cloud tooling, including Azure, now makes prototypes look far more complete than they really are. That is great for innovation. It is dangerous for governance.

My opinion is simple: enterprises do not need to slow down experimentation. They need to get much stricter about what counts as ready.

The weekend build is the new strategy deck

If you are a data or platform leader, you have probably sat through a demo that looked suspiciously close to a product decision. A model answers natural-language questions. A few enterprise datasets are connected. An API or function wraps the workflow. There is a polished UI. Someone says, “We built this over the weekend.”

That sentence lands differently now because the tooling really has improved. Connecting data, orchestration, and model interaction is easier than it was even a year ago.

Let’s give prototypes proper credit. Fast builds are excellent at:

- validating whether users actually want the experience

- exposing where data access is still painfully manual

- revealing where natural-language interaction collapses workflow complexity

- surfacing the integration points that matter most

That is a feature, not a flaw.

The mistake is treating a prototype as an architecture endorsement. A prototype is a discovery instrument. It tells you where value might exist. It does not tell you whether the system is supportable, governable, or economically sane.

The contrast I use with leadership teams is simple:

- A demo proves possibility.

- Production requires repeatability, accountability, and bounded risk.

That gap is where most AI platform failures happen.

The hidden cliff: operating model debt starts with identity

The fastest way to turn an AI data prototype into an enterprise incident is to let identity sprawl masquerade as agility.

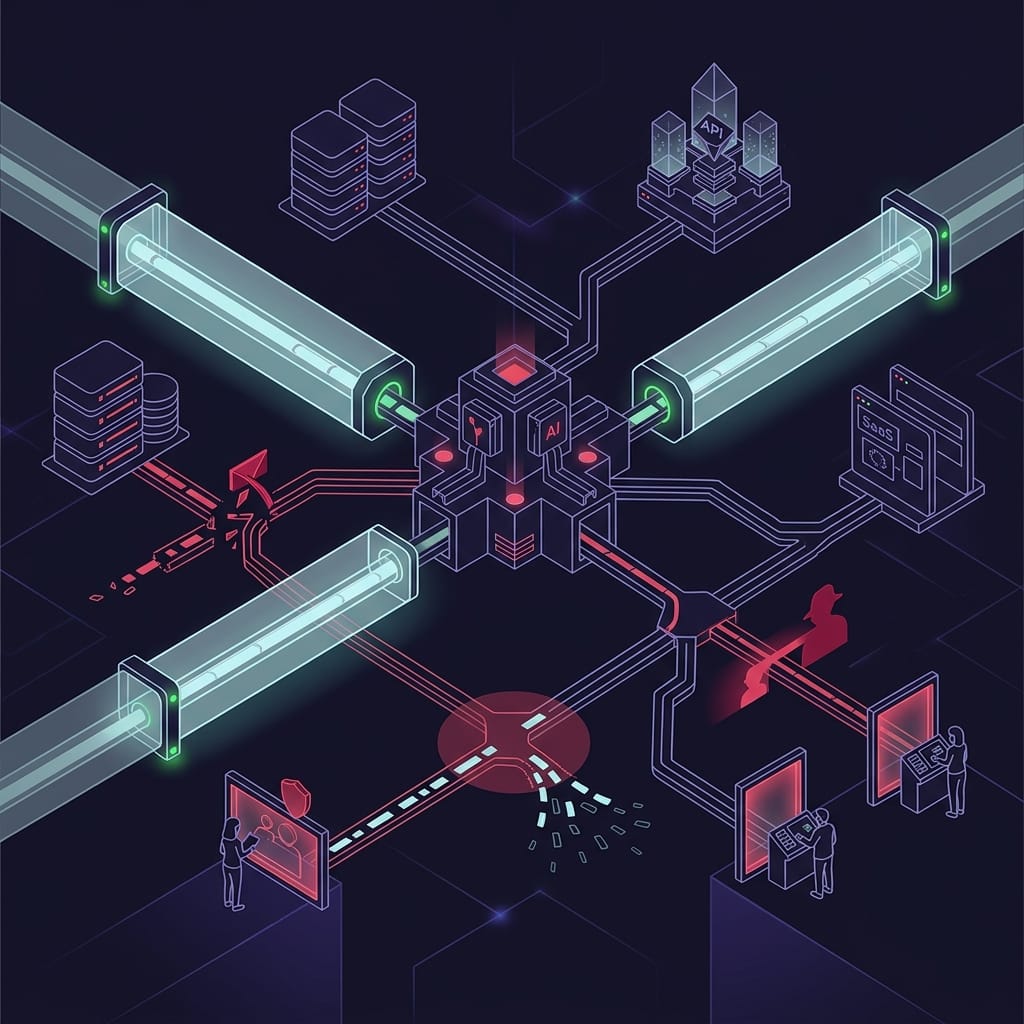

Modern infrastructure security guidance is clear on the core point: defense in depth is not a single perimeter. It spans identity, software supply chains, control planes, networks, and data. That is exactly the right mental model for AI data platforms. They are layered systems, not demos, and they fail as layered systems too.

The concrete failure modes are not hypothetical:

- OAuth consent abuse can grant an app access it never should have had

- token leakage in local tooling or logs can turn a debug convenience into a credential incident

- adversary-in-the-middle phishing can capture credentials or sessions if controls are weak

- overprivileged service principals can give agents broad access to sensitive datasets and downstream actions

Agentic systems make this worse because they multiply trust relationships. The model has one set of permissions. The tool layer has another. APIs have their own auth boundaries. Databases have data-plane roles. Humans may approve actions in yet another control path.

If you do not define those boundaries explicitly, your “smart assistant” becomes a permission amplifier.

A simple way to explain the difference between a weekend build and a promotable workload:

- hardcoded secrets become managed identity or another secretless pattern

- shared roles become scoped RBAC and data-plane roles

- print statements become structured logs, traces, and metrics

- enthusiasm-based promotion becomes policy-gated promotion

That is not bureaucracy. That is the minimum architecture required to limit blast radius.

Now look at the anti-pattern in code form:

# Anti-pattern: a prototype couples secrets, broad access, and ad-hoc logging

import json

import time

SQL_CONNECTION = "Server=tcp:demo.database;User Id=admin;Password=SuperSecret!"

AGENT_API_KEY = "sk-dev-token"

TOOL_SCOPE = "all-datasets"

def run_prototype(question: str) -> str:

print(f"[debug] connecting with {SQL_CONNECTION}")

print(f"[debug] calling agent with key={AGENT_API_KEY} scope={TOOL_SCOPE}")

time.sleep(0.1)

result = {"question": question, "answer": "prototype output", "source": "shared-prod-db"}

print("[debug] result=", result)

return json.dumps(result)

if __name__ == "__main__":

print(run_prototype("Which customers are at risk?"))

What matters here is not just the hardcoded secret. It is the combination of copied credentials, broad scope, and ad-hoc logging. That combination is exactly how teams create a system that “works” and still fails every serious production review.

A better pattern is boring by design:

# Production pattern: managed identity, scoped access, and structured telemetry

import json

import logging

from dataclasses import dataclass

logging.basicConfig(level=logging.INFO, format="%(message)s")

logger = logging.getLogger("ai-platform")

@dataclass

class RequestContext:

principal_id: str

db_role: str

tool_scope: str

trace_id: str

def run_production(question: str, ctx: RequestContext) -> str:

logger.info(json.dumps({"event": "auth", "mode": "managed_identity", "principal": ctx.principal_id, "trace_id": ctx.trace_id}))

logger.info(json.dumps({"event": "authorize", "db_role": ctx.db_role, "tool_scope": ctx.tool_scope, "trace_id": ctx.trace_id}))

result = {"question": question, "answer": "production output", "source": "curated-view", "trace_id": ctx.trace_id}

logger.info(json.dumps({"event": "completed", "status": "ok", "trace_id": ctx.trace_id}))

return json.dumps(result)

if __name__ == "__main__":

context = RequestContext("mi://analytics-app", "db_datareader_curated", "customer-risk-read", "trace-001")

print(run_production("Which customers are at risk?", context))

What changes here is what matters in production: managed identity instead of embedded credentials, scoped roles instead of blanket access, and structured telemetry instead of console noise. The examples illustrate control intent and telemetry patterns, not full enforcement logic. In a real system, authorization must be enforced in code and platform policy, not inferred from logs. And safe logging matters: log only the minimum operational context needed, never raw secrets, tokens, or unnecessary user/PII payloads.

My baseline production bar is non-negotiable:

- managed identities where possible

- least-privilege scopes

- secretless patterns

- conditional access

- consent governance

- token handling standards

- explicit separation between build-time and run-time credentials

If a prototype cannot evolve into that model, it should not be promoted.

Trust is deeper than application code

A lot of AI platform conversations still happen too high in the stack. People debate prompts, tools, and orchestration while ignoring the trust primitives underneath.

That is a mistake.

Enterprise trust runs deeper into infrastructure: key custody, identity boundaries, data contracts, and observability. For AI and data leaders, that matters because sensitive prompts, embeddings, retrieval indexes, and downstream action paths all expand the blast radius of weak controls.

If your prototype depends on copied secrets, shared admin accounts, or opaque key custody, it has already failed the production test.

The same is true of data access. This is where elegant agent demos become brittle systems:

- pipelines prepare data one way

- the agent expects it another way

- semantic assumptions live in prompts or tool code

- the whole thing works only under ideal conditions

“Chatting with data” is not a data platform strategy unless the underlying data contracts are stable and governed.

That is why database modernization matters here. AI maturity depends on the data estate being modernized enough to support governed access patterns, not just model connectivity.

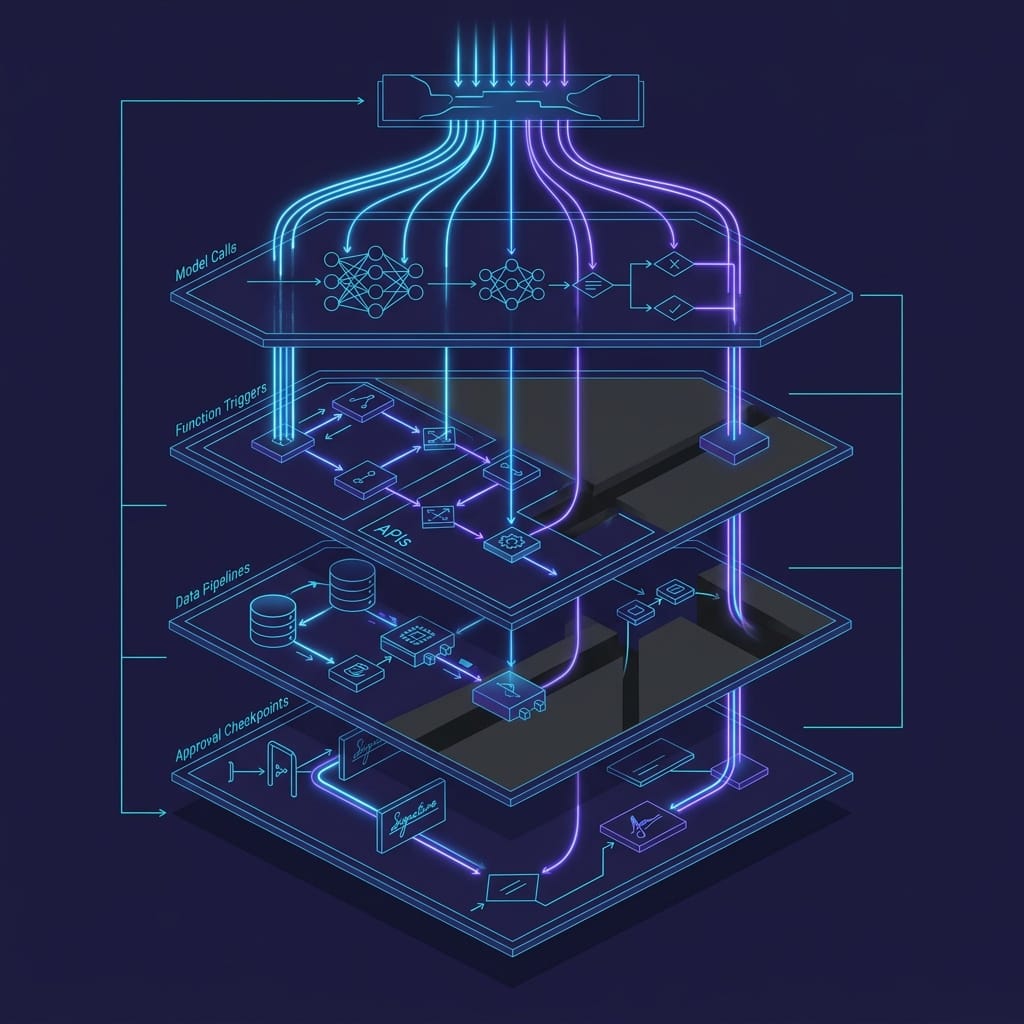

And once those workflows go live, observability becomes the dividing line between experimentation and operations.

If leaders cannot trace what the agent saw, decided, called, and returned, they do not have a production system.

For AI/data workloads, observability has to cover more than uptime:

- prompt traces

- tool invocation logs

- data lineage

- model and version attribution

- latency decomposition

- failure correlation

- user and session context

Serverless and event-driven patterns can accelerate prototypes, but they also fragment telemetry if you do not design for trace continuity from the start.

The promotion pattern I recommend is straightforward:

- validate identity before promotion

- require diagnostics for logs, metrics, and traces

- block higher-environment deployment when those controls are missing

- return remediation steps instead of relying on manual exceptions

Here is a concrete example of a simple governance gate for identity:

# Governance check: require managed identity before promotion

param(

[hashtable]$Workload = @{

Name = "ai-risk-service"

IdentityType = "SystemAssigned"

Environment = "preprod"

}

)

if ($Workload.IdentityType -notin @("SystemAssigned", "UserAssigned")) {

throw "Promotion blocked: workload '$($Workload.Name)' must use managed identity."

}

[pscustomobject]@{

Workload = $Workload.Name

Check = "ManagedIdentity"

Result = "Passed"

Target = $Workload.Environment

} | Format-List

And here is the equivalent gate for diagnostics:

# Governance check: require diagnostic settings for logs, metrics, and traces

param(

[hashtable]$Diagnostics = @{

ResourceName = "ai-risk-service"

LogsEnabled = $true

MetricsEnabled = $true

TraceExport = "OpenTelemetry"

}

)

$missing = @()

if (-not $Diagnostics.LogsEnabled) { $missing += "logs" }

if (-not $Diagnostics.MetricsEnabled) { $missing += "metrics" }

if ([string]::IsNullOrWhiteSpace($Diagnostics.TraceExport)) { $missing += "trace export" }

if ($missing.Count -gt 0) {

throw "Promotion blocked: missing diagnostic controls: $($missing -join ', ')."

}

"Diagnostics check passed for $($Diagnostics.ResourceName)"

Treat examples like these as policy intent. The exact implementation can vary, but the principle should not: no managed identity, no promotion; no logs, metrics, and trace export, no promotion.

Cost discipline is architecture discipline

AI excitement has not repealed financial accountability.

The old cost principles still matter in the age of AI, and weekend builds systematically hide true cost because they underrepresent concurrency, retries, retention, storage growth, operational support, and the ugly tail of failure handling.

A prototype that costs $40 to run in a demo can become a five-figure monthly surprise once you add:

- real user volume

- larger context windows

- repeated retrieval operations

- storage accumulation

- background refresh jobs

- observability retention

- support overhead

Production readiness should therefore include unit economics:

- cost per workflow

- cost per agent interaction

- cost per dataset refresh

- cost per business outcome

If you cannot express those numbers, you do not have architecture discipline yet.

This is also where automated cost hygiene matters. Storage lifecycle optimization, tiering, and retention controls are not side topics once AI/data exhaust starts accumulating. Uncontrolled AI/data cost is usually not a tooling problem. It is a symptom of unclear ownership.

Governance now has to cover APIs, models, tools, and agents together

One of the most important platform shifts happening right now is that the control plane is expanding.

Enterprises can no longer govern REST endpoints one way and agent actions another. Both are callable operational interfaces. Both have policy, security, and lifecycle implications.

That means your governance model has to cover, across every callable surface:

- authentication

- authorization

- throttling

- versioning

- approval paths

- audit trails

- deprecation policies

Without that, teams create shadow control planes: agents invoking business actions outside normal API governance.

A lightweight way to make this visible is a readiness scorecard:

# Readiness scorecard: translate prototype lessons into production criteria

from dataclasses import dataclass, asdict

import json

@dataclass

class ReadinessScore:

identity: bool

least_privilege: bool

telemetry: bool

policy_gate: bool

def summary(self) -> str:

passed = sum(asdict(self).values())

return json.dumps({"passed_controls": passed, "total_controls": 4, "ready": passed == 4})

if __name__ == "__main__":

prototype = ReadinessScore(False, False, False, False)

production = ReadinessScore(True, True, True, True)

print("prototype:", prototype.summary())

print("production:", production.summary())

This is simplified on purpose. Leaders need a small set of controls that are easy to understand and hard to waive. If identity, least privilege, telemetry, and policy gates are missing, the workload is not ready.

Production readiness includes deployment boundaries

Some AI/data workloads cannot assume a default public-cloud operating model.

For regulated or sovereignty-sensitive workloads, production readiness includes where the platform runs, who controls it, and what dependencies it can tolerate.

That changes architecture choices upstream.

If the target operating model is sovereign, hybrid, or disconnected, then identity patterns, data movement, support procedures, and vendor dependencies all need to be designed differently from the start. Sovereignty is not just a compliance line item. It is a design constraint.

This is why I push leaders to answer the deployment-boundary question early, not after the prototype succeeds.

The practical production bar leaders should use

Here is the standard I would apply before scaling any AI data prototype:

- identity model defined

- managed identity or equivalent secretless pattern in place

- data access contracts documented

- least-privilege roles enforced

- observability implemented across workflows, tools, and approvals

- support ownership assigned

- cost guardrails defined

- governance mapped across APIs, models, tools, and agents

- deployment boundary chosen up front

If the team cannot explain how the system fails safely, rotates credentials with fallback planning, limits blast radius, and gets supported at 2 a.m., it is still an experiment.

That is not a criticism. It is a classification.

The real lesson from weekend-built AI data platforms is not that enterprises should move slower. It is that they should stop confusing prototype velocity with production evidence. Better tooling makes experimentation easier than ever. That makes executive discipline more important, not less.

The competitive advantage is no longer building an AI data app over a weekend. It is operationalizing the right one without inheriting invisible risk.

For practitioners: which control most often blocks promotion in your environment: identity, telemetry, data contracts, cost guardrails, or ownership? And what is the one minimum production gate you refuse to waive for AI workloads?

#EnterpriseAI #DataArchitecture #PlatformEngineering

Sources & References

- Azure IaaS: Defense in depth built on secure-by-design principles

- Enforcing trust and transparency: Open-sourcing the Azure Integrated HSM

- Microsoft Discovery: Advancing agentic R&D at scale

- Introducing Azure Accelerate for Databases: Modernize your data for AI with experts and investments

- Cloud Cost Optimization: Principles that still matter

- Optimize object storage costs automatically with smart tier, now generally available

- Microsoft Sovereign Private Cloud scales to thousands of nodes with Azure Local

Try it yourself

Run this tutorial as a Jupyter notebook: Download runbook.ipynb (26 cells, 23 KB).