Why AI-era operating models require better data product ownership and lineage

Data ownership is the first thing AI breaks in production.

Most AI operating model debates obsess over models, copilots, and agents. The harder truth is that enterprise AI usually breaks first at the seams of data ownership: when nobody can say who owns a data product, what changed upstream, or whether an agent should have touched that data at all.

Here’s the contrarian view: better models are no longer the main constraint for enterprise AI. The real differentiator is whether your operating model makes data products governable, traceable, and trustworthy across AI-connected workflows.

That is an opinionated claim, but it is also a practical one.

As AI systems handle more sensitive data and more autonomous workflows, trust has to be engineered into the stack, not added later. Microsoft has made that point directly in its work on verifiable trust infrastructure, and its push to govern not just APIs but also models, tools, and agents reinforces the same message: AI expands the governance surface area rather than replacing it.

My thesis is simple: agents amplify bad metadata, weak stewardship, unclear domain accountability, and poor identity or consent controls at machine speed. If your organization still treats data ownership as fuzzy, lineage as optional documentation, and access policy as a one-time setup task, your AI operating model is already behind.

The contrarian case: AI failures are increasingly operating model failures

The conventional executive instinct is to frame AI transformation as a model-selection problem. Which model? Which copilot? Which agent framework?

That instinct made sense when frontier capability was scarce. It makes less sense now.

As enterprise AI patterns move into production workflows, organizational readiness becomes the bottleneck. A CDO I worked with had nine AI assistants querying three different “customer 360” tables before anyone could say which one was the governed version and which ones still contained revoked marketing consent flags.

That is not a model problem. That is an operating model failure.

Humans can sometimes work around ambiguity. Agents cannot. They operationalize whatever metadata, policies, and permissions you give them.

That is why AI raises the business cost of bad metadata and weak stewardship:

- A stale business definition in a dashboard is annoying. Reused by an agent across thousands of interactions, it becomes a systematic error source.

- A missing quality signal in a report is a nuisance. In an AI workflow, it means the system cannot distinguish trusted from degraded inputs before generating outputs.

- An undocumented upstream transformation is survivable in a manual process. In an agentic process, it becomes a hidden dependency that can corrupt decisions repeatedly until someone notices.

This is no longer back-office hygiene. It is revenue risk, compliance exposure, customer trust, and wasted AI spend.

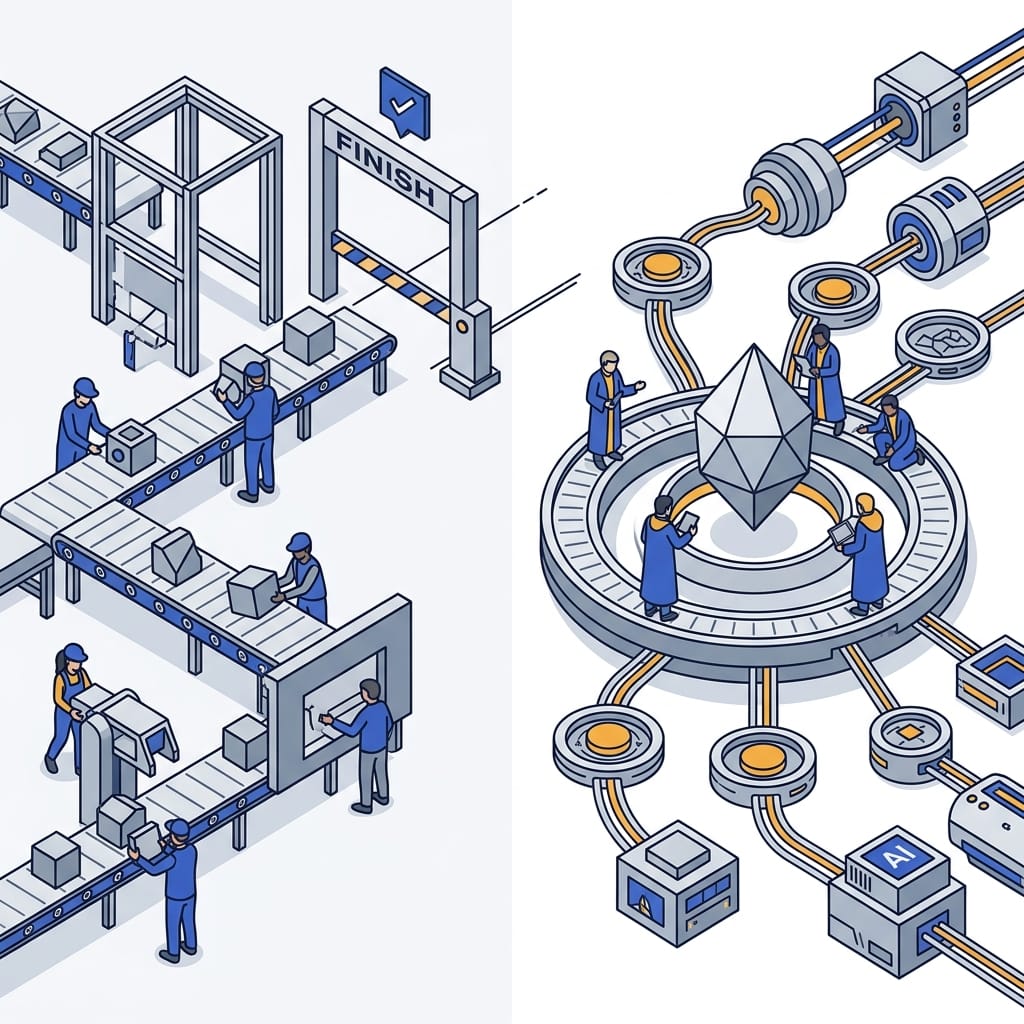

Project teams cannot govern what products must continuously serve

This is where many organizations fool themselves. They say they have “data products,” but they still fund and govern them like temporary integration projects.

A project team optimizes for launch:

- deliver the pipeline

- meet the milestone

- move to the next scope item

A real data product operating model optimizes for continuous service:

- preserve semantic clarity

- maintain quality thresholds

- manage access and policy changes

- understand downstream dependencies

- retain consumer trust over time

That difference matters much more in AI than in traditional analytics.

A true data product owner should own at least six things:

- The product’s business purpose

- Its quality thresholds and incident response expectations

- Its policy and sensitivity alignment

- Its approved access patterns

- Its lifecycle and deprecation path

- Its consumer trust contract

If no one owns those things, then “ownership” is just metadata decoration.

For non-engineering leaders, this is the key idea: ownership only matters if it triggers action when something goes wrong.

```python
# Ownership as an operating model contract, not just metadata decoration
class DataProductContract:
    def __init__(self, name: str, owner: str, sla_hours: int):
        self.name = name
        self.owner = owner
        self.sla_hours = sla_hours

    def on_quality_incident(self) -> str:
        return f"Notify {self.owner}; restore {self.name} within {self.sla_hours}h"


contract = DataProductContract("customer360", "growth-data@company.com", 4)
print(contract.on_quality_incident())
```

What matters here is not the code. It is the operating model behind it: the owner is tied to an explicit response expectation.

Lineage is not a compliance artifact; it is an operational control plane

Lineage is still treated in too many companies as passive documentation for auditors and architecture reviews. That view is obsolete.

In AI-connected workflows, lineage is an operational control plane.

You need it to answer production questions that actually matter:

- Which agent used which source?

- What transformation introduced the error?

- Which policy boundary was crossed?

- Which downstream outputs need to be revoked or corrected, and which models need to be retrained?

- What is the blast radius of this upstream change?

Without lineage, incident response turns into archaeology.

With lineage, you can make AI systems observable enough to govern. That is why lineage matters for rollback, change management, and executive confidence.
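
For teams that want to see what the blast-radius question looks like in practice, here is a minimal sketch. It assumes lineage is available as a plain mapping from each asset to its direct downstream consumers. The asset names and the `blast_radius` helper are hypothetical and are not taken from any particular lineage tool.

```python
from collections import deque

# Hypothetical lineage edges: asset -> direct downstream consumers.
LINEAGE = {
    "crm_raw": ["customer360"],
    "consent_events": ["customer360"],
    "customer360": ["churn_features", "support_agent_index"],
    "churn_features": ["retention_agent"],
    "support_agent_index": [],
    "retention_agent": [],
}


def blast_radius(lineage: dict[str, list[str]], changed: str) -> list[str]:
    """Return every asset downstream of `changed`, in breadth-first order."""
    seen: set[str] = set()
    order: list[str] = []
    queue = deque(lineage.get(changed, []))
    while queue:
        asset = queue.popleft()
        if asset in seen:
            continue  # already reached through another path
        seen.add(asset)
        order.append(asset)
        queue.extend(lineage.get(asset, []))
    return order


print(blast_radius(LINEAGE, "consent_events"))
# ['customer360', 'churn_features', 'support_agent_index', 'retention_agent']
```

A change to one consent feed reaches two agents in this toy graph. That list is what turns an upstream change into a concrete notification and rollback plan instead of a guess.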

The same logic applies to metadata and stewardship more broadly. Before an AI workflow consumes a data product, three things should be explicit: a named owner, verified lineage, and policy approval for AI use.

For non-engineering leaders, this block shows the minimum gate an AI workflow should pass before it touches enterprise data.

```python
# Metadata gate before an AI workflow uses a data product
from dataclasses import dataclass


@dataclass
class DataProduct:
    name: str
    owner: str
    lineage_status: str
    policy_tags: set[str]


def can_use_for_ai(product: DataProduct) -> bool:
    if not product.owner:
        raise PermissionError(f"{product.name}: missing owner")
    if product.lineage_status != "verified":
        raise PermissionError(f"{product.name}: lineage not verified")
    if "ai-approved" not in product.policy_tags:
        raise PermissionError(f"{product.name}: missing ai-approved tag")
    return True


customer360 = DataProduct("customer360", "growth-data@company.com", "verified", {"pii-reviewed", "ai-approved"})
print(can_use_for_ai(customer360))
```

That is not production-ready governance code. It is a simple illustration of a fail-fast principle many AI stacks still lack.

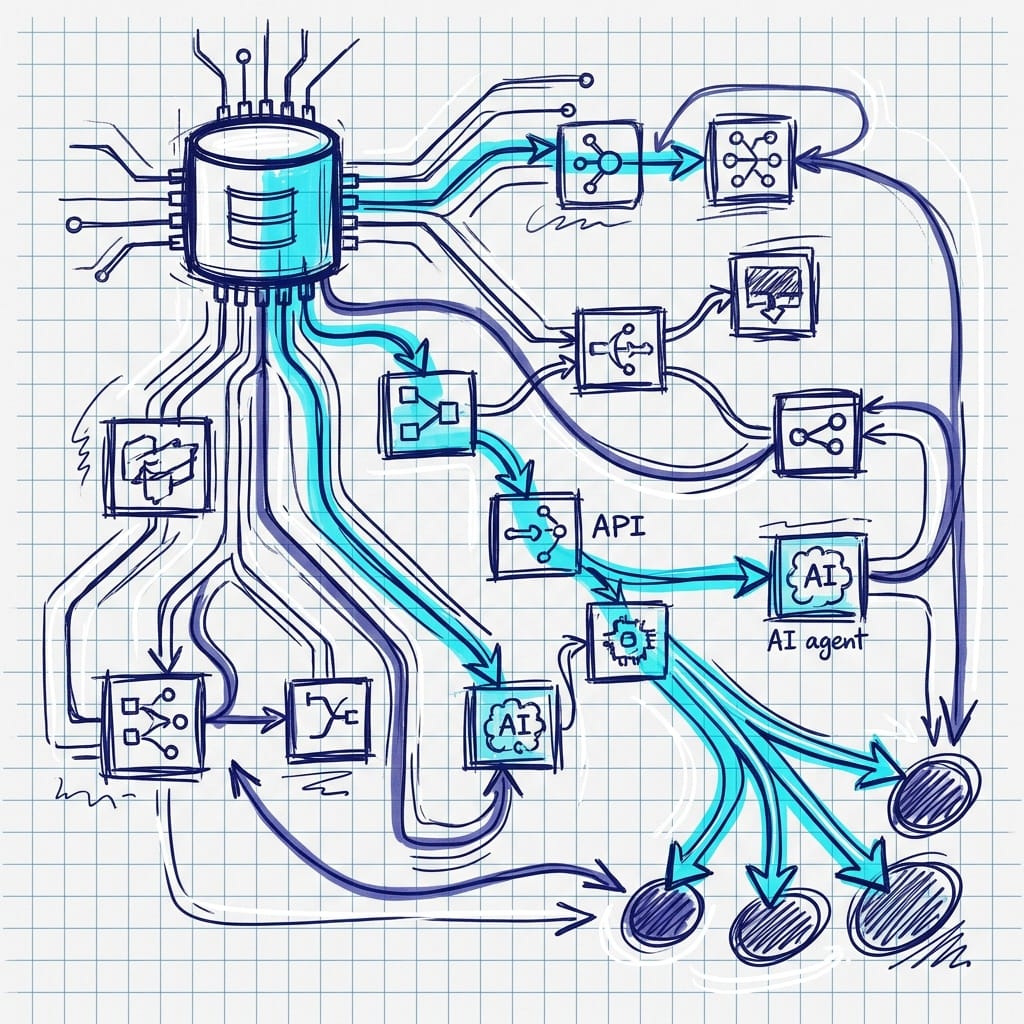

Here is the operating pattern in one view:

```mermaid
flowchart TD
    A[AI use case requests data product] --> B{Ownership metadata present?}
    B -- No --> X[Block usage and raise governance alert]
    B -- Yes --> C{Lineage verified to approved sources?}
    C -- No --> Y[Quarantine dataset for review]
    C -- Yes --> D{Policy tags allow AI workload?}
    D -- No --> Z[Deny access and log exception]
    D -- Yes --> E[Allow feature generation / model inference]
    E --> F[Record decision with owner, lineage, and policy snapshot]
```

That is how lineage stops being documentation and starts becoming control.
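
The last step in that flow, recording the decision with an owner, lineage, and policy snapshot, is the one teams most often skip. Here is a minimal sketch of what such a record could look like, assuming a JSON audit log. The `record_decision` helper and its field names are hypothetical, not a standard schema.

```python
import json
from datetime import datetime, timezone


def record_decision(product: str, owner: str, lineage_status: str,
                    policy_tags: set[str], allowed: bool) -> str:
    """Serialize one access decision with the metadata seen at decision time."""
    entry = {
        "product": product,
        "owner": owner,
        "lineage_status": lineage_status,
        "policy_tags": sorted(policy_tags),  # sets are not JSON-serializable
        "allowed": allowed,
        "decided_at": datetime.now(timezone.utc).isoformat(),
    }
    return json.dumps(entry, sort_keys=True)


print(record_decision(
    "customer360", "growth-data@company.com", "verified",
    {"pii-reviewed", "ai-approved"}, allowed=True,
))
```

The point of the snapshot is that it captures what the gate saw at the time. If a tag is revoked next month, you can still reconstruct why access was allowed today.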

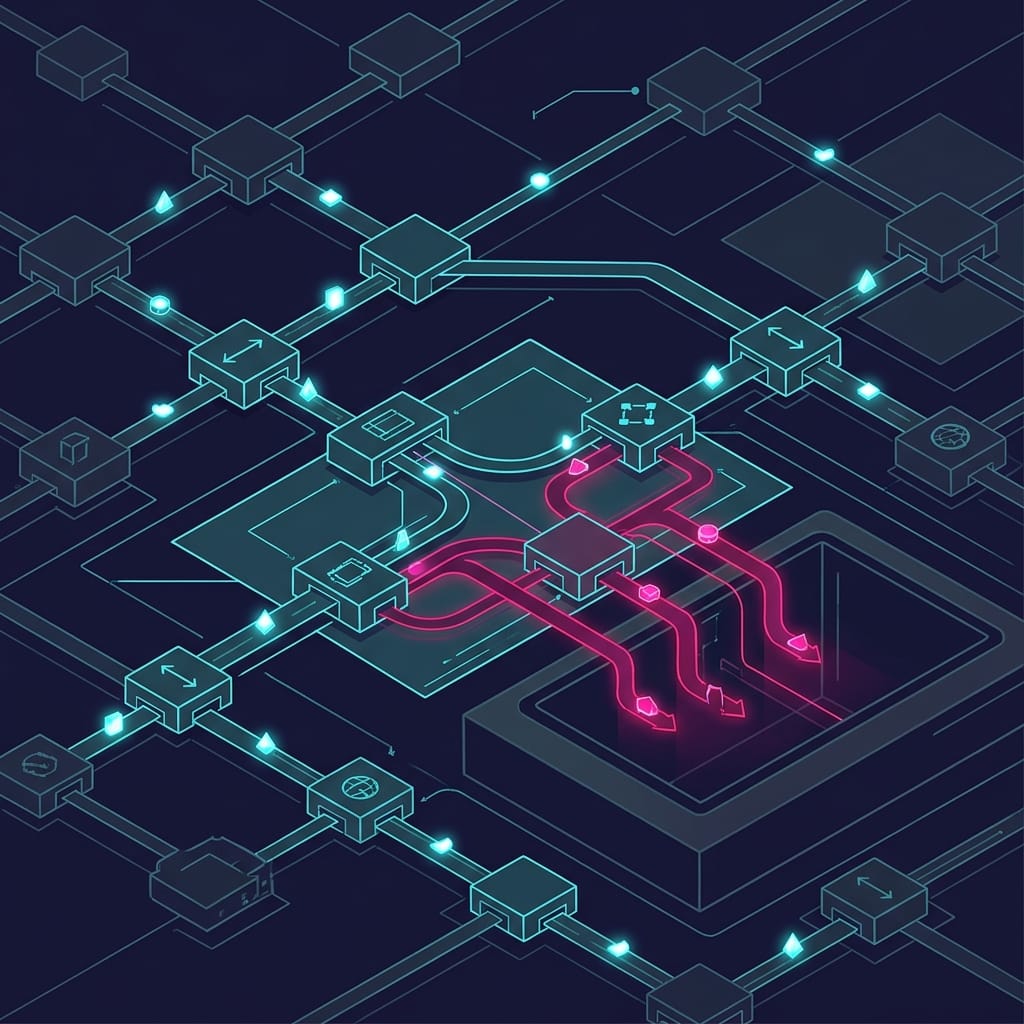

The hidden risk surface: identity, consent, and policy drift

The governance conversation cannot stop at schemas and quality rules. AI-connected workflows expand the control surface dramatically.

Every new agent, tool invocation, API call, delegated permission, and service identity creates another path to enterprise data. That means more tokens, more consent paths, more opportunities for overbroad access, and more room for policy drift.

This is why API governance matters more in the AI era, not less. Microsoft’s positioning of API management for models, tools, and agents is useful evidence here because it shows the perimeter has widened: governance now has to cover the full interaction layer around AI systems, not just the underlying data stores.

The operating-model point is simple: poor domain ownership weakens identity and consent controls because nobody is clearly accountable for whether access should exist, not just whether it technically can.

That distinction is everything.
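
To make the difference between "can" and "should" tangible, here is a minimal sketch of a drift check. It assumes the domain owner maintains an approved scope list per service identity and that granted scopes can be exported from your identity system. All identity names, scope strings, and the `find_policy_drift` helper are hypothetical.

```python
# Hypothetical data: what the domain owner approved vs. what each identity holds.
APPROVED = {
    "support-agent": {"customer360:read"},
    "retention-agent": {"churn_features:read"},
}
GRANTED = {
    "support-agent": {"customer360:read", "customer360:export"},
    "retention-agent": {"churn_features:read"},
    "legacy-bot": {"customer360:read"},  # no approval on record at all
}


def find_policy_drift(approved: dict[str, set[str]],
                      granted: dict[str, set[str]]) -> dict[str, set[str]]:
    """Map each identity to the scopes it holds beyond what was approved."""
    drift = {}
    for identity, scopes in granted.items():
        excess = scopes - approved.get(identity, set())
        if excess:
            drift[identity] = excess
    return drift


for identity, excess in sorted(find_policy_drift(APPROVED, GRANTED).items()):
    print(f"{identity}: unapproved scopes {sorted(excess)}")
# legacy-bot: unapproved scopes ['customer360:read']
# support-agent: unapproved scopes ['customer360:export']
```

The check itself is trivial. What is usually missing is the approved list, and that only exists when someone is accountable for maintaining it.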

Frontier firms will win on org design, not just model access

As models and agentic workflows become easier to adopt, the firms that clarify domain ownership and lineage now will scale faster because they can delegate safely.

They will know:

- which data products are approved for AI use

- which ones are restricted

- who signs off on changes

- how to trace downstream impact when something breaks

The laggards will keep repeating the same cycle:

- launch an AI pilot

- discover unclear ownership

- trigger review delays

- find undocumented transformations

- remediate permissions

- lose trust

- restart

That is not a technology maturity problem. It is an org design problem.

If your data products are not legible, your AI operating model is not scalable.

What leaders should change now

If you are responsible for enterprise AI, do these five things now.

1) Assign named business-accountable owners for every AI-critical data product

Not platform owners. Not generic stewards. Named business-accountable owners.

If a data product feeds an agent, recommendation engine, retrieval workflow, or customer-facing AI output, someone must own the definition, policy posture, and incident response path.

2) Define a minimum viable metadata standard for AI consumption

At minimum, every AI-consumable data product should have:

- business definition

- quality expectations

- sensitivity classification

- consent basis

- access policy

- lineage status

- downstream dependencies

- named owner
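
A standard like this only helps if it is checked automatically. Here is a minimal sketch, assuming each catalog record is a plain dictionary. The snake_case field names mirror the checklist above but are an assumption, as is the `missing_metadata` helper.

```python
# Assumed field names, one per item in the checklist above.
REQUIRED_FIELDS = (
    "business_definition",
    "quality_expectations",
    "sensitivity_classification",
    "consent_basis",
    "access_policy",
    "lineage_status",
    "downstream_dependencies",
    "named_owner",
)


def missing_metadata(record: dict) -> list[str]:
    """Return required fields that are absent, None, or an empty string."""
    missing = []
    for field in REQUIRED_FIELDS:
        value = record.get(field)
        # An empty list is allowed: "no downstream dependencies" is a valid answer.
        if value is None or value == "":
            missing.append(field)
    return missing


record = {
    "business_definition": "One row per active customer",
    "quality_expectations": "Refreshed daily; under 1% null emails",
    "sensitivity_classification": "PII",
    "access_policy": "role:growth-analyst",
    "lineage_status": "verified",
    "downstream_dependencies": [],
    "named_owner": "",
}
print(missing_metadata(record))
# ['consent_basis', 'named_owner']
```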

3) Treat lineage coverage as an operational readiness requirement

If agents can trigger actions or produce customer-facing outputs, lineage should be mandatory, not aspirational.

4) Unify governance across data, security, APIs, and domain teams

Fragmented committees do not govern AI well. The control points now span data platforms, policy engines, service identities, APIs, and domain-specific approval paths. Your operating model has to reflect that reality.

5) Measure the right operational metrics

Do not settle for vanity metrics like the number of pilots launched.

Track:

- percentage of AI-critical data products with named owners

- percentage with verified lineage

- time to impact analysis after upstream change

- number of policy exceptions tied to AI workflows

- time to revoke or quarantine affected downstream outputs

Those are operating-model metrics. They are also AI scale metrics.
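
The first two metrics are cheap to compute once a catalog exists. Here is a minimal sketch, assuming a list of catalog entries with an owner field and a lineage flag. The `CatalogEntry` shape, the sample data, and the `coverage_pct` helper are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class CatalogEntry:
    name: str
    owner: str  # empty string means no named owner
    lineage_verified: bool


def coverage_pct(entries: list[CatalogEntry],
                 check: Callable[[CatalogEntry], bool]) -> float:
    """Percentage of entries for which `check` returns True."""
    if not entries:
        return 0.0
    return 100.0 * sum(1 for e in entries if check(e)) / len(entries)


# Illustrative sample of AI-critical data products.
AI_CRITICAL = [
    CatalogEntry("customer360", "growth-data@company.com", True),
    CatalogEntry("churn_features", "retention@company.com", False),
    CatalogEntry("support_agent_index", "", False),
    CatalogEntry("consent_events", "privacy@company.com", True),
]

print(f"named owners: {coverage_pct(AI_CRITICAL, lambda e: bool(e.owner)):.0f}%")
print(f"verified lineage: {coverage_pct(AI_CRITICAL, lambda e: e.lineage_verified):.0f}%")
# named owners: 75%
# verified lineage: 50%
```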

The unglamorous foundations will decide who scales AI responsibly

The winners of the AI era will not simply have the best models. They will have the clearest accountability for the data those models and agents rely on.

Ownership and lineage are not bureaucratic overhead. They are the mechanisms that make AI scalable, auditable, and economically rational. They are how you prevent agents from amplifying ambiguity. They are how you turn trust from a slogan into an operating capability.

So yes, keep evaluating models. Keep experimenting with copilots and agents. But stop pretending that model quality can compensate for unclear ownership, weak metadata, and missing lineage.

It cannot.

Which of these is your biggest blocker today: named ownership, verified lineage, or policy approval for AI use?

#EnterpriseAI #DataArchitecture #AIGovernance

Sources & References

- Enforcing trust and transparency: Open-sourcing the Azure Integrated HSM

- Microsoft named a Leader in the IDC MarketScape: Worldwide API Management 2026 Vendor Assessment

- Microsoft Discovery: Advancing agentic R&D at scale

- Introducing Azure Accelerate for Databases: Modernize your data for AI with experts and investments
