Token efficiency as the new FinOps metric for GitHub and Copilot agent workflows

Seat counts are the wrong Copilot metric. Token efficiency is the one that will decide whether agentic software delivery scales economically or turns into a very expensive illusion.

Most Copilot governance still looks like SaaS procurement: count seats, approve models, hope productivity follows. But as agentic workflows scale, the real cost lever is no longer license allocation. It is token efficiency per useful engineering outcome.

Here is the distinction I think matters most:

- Microsoft documents FinOps as an operational framework for maximizing business value through timely, data-driven decision-making and accountability.

- Microsoft also documents token counting mechanics, and a Microsoft Q&A answer states that GitHub Copilot does not currently provide a built-in dashboard for directly measuring team adoption, efficiency, usage, and ROI.

- My opinion is that token efficiency should become the primary governance metric for Copilot and agent workflows, because access alone does not explain whether AI spend is producing durable engineering value.

That is not a semantic distinction. It is the governance shift.

The uncomfortable truth: seat management is not AI FinOps

A Copilot seat tells you who can access AI. It tells you almost nothing about whether your organization is using AI efficiently.

That mattered less when Copilot was mostly autocomplete. It matters much more now that GitHub Copilot is increasingly part of multi-step workflows, including agent-based task execution and CI/CD-connected modernization patterns. Once AI moves from ad hoc assistance into repeatable delivery workflows, the economics become operational.

In Q1, I reviewed a 220-developer platform organization where one modernization workflow generated roughly three times more AI spend per merged change than another, even though both teams had identical Copilot access and the same approval policy. Treat that as a directional field observation, not statistically rigorous proof: “AI spend” included model-driven workflow consumption, and “merged change” meant a PR merged to the main delivery branch.

That is the point: licenses did not explain the variance. Workflow design did.

This is why token efficiency is the AI-era equivalent of cloud rightsizing. Rightsizing never meant “use less cloud.” It meant “stop paying for waste and align spend to value.” Token efficiency is the same discipline. It is not anti-innovation. It is pro-leverage.

Why now: agentic development changes the unit economics

Simple code completion had fuzzy unit economics. Agents do not.

Microsoft’s guidance on AI agents for FinOps hubs describes agents as systems that combine LLMs with external tools and data sources. Microsoft’s GitHub Copilot modernization guidance shows agentic workflows being integrated into CI/CD pipelines. Microsoft training for GitHub Copilot coding agents frames agents as a way to assign tasks and streamline development. In other words, AI is no longer just helping a developer type faster. It is participating in delivery systems.

Once that happens, you need a unit-cost model tied to outcomes.

The most practical unit is the token. Microsoft’s token counting documentation explains why: LLMs operate on tokens rather than raw characters, and token counting estimates input and output usage before requests are made. That makes tokens the clearest controllable measure because they sit at the intersection of four things leaders can actually influence:

- model choice

- prompt scope

- context size

- iteration behavior

If you do not measure tokens against useful outcomes, you will confuse activity with value.
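
To make prompt scope and context size tangible, here is a minimal sketch of a pre-request budget check. It assumes a rough rule of thumb of about four characters per token for English text, which is only an approximation; real tokenizers vary by model, so swap in your provider's token counter. The names `estimate_tokens`, `within_budget`, and `CHARS_PER_TOKEN`, and the budget value, are hypothetical.

```python
import math

# Assumption: ~4 characters per token is a rough heuristic for English text.
# Real tokenizers differ by model; replace this with your provider's counter.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Return a rough token estimate for a piece of text."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def within_budget(prompt: str, context_chunks: list[str], max_input_tokens: int) -> bool:
    """Check whether the prompt plus attached context fits a hypothetical input budget."""
    total = estimate_tokens(prompt) + sum(estimate_tokens(c) for c in context_chunks)
    return total <= max_input_tokens


prompt = "Upgrade dependency X and fix the failing tests."
context = ["def handler(event):\n    return process(event)\n" * 50]

print(estimate_tokens(prompt))
print(within_budget(prompt, context, max_input_tokens=1000))
```

Even a crude estimate like this is enough to catch the obvious cases, such as a workflow that attaches an entire repository when a single module would do.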

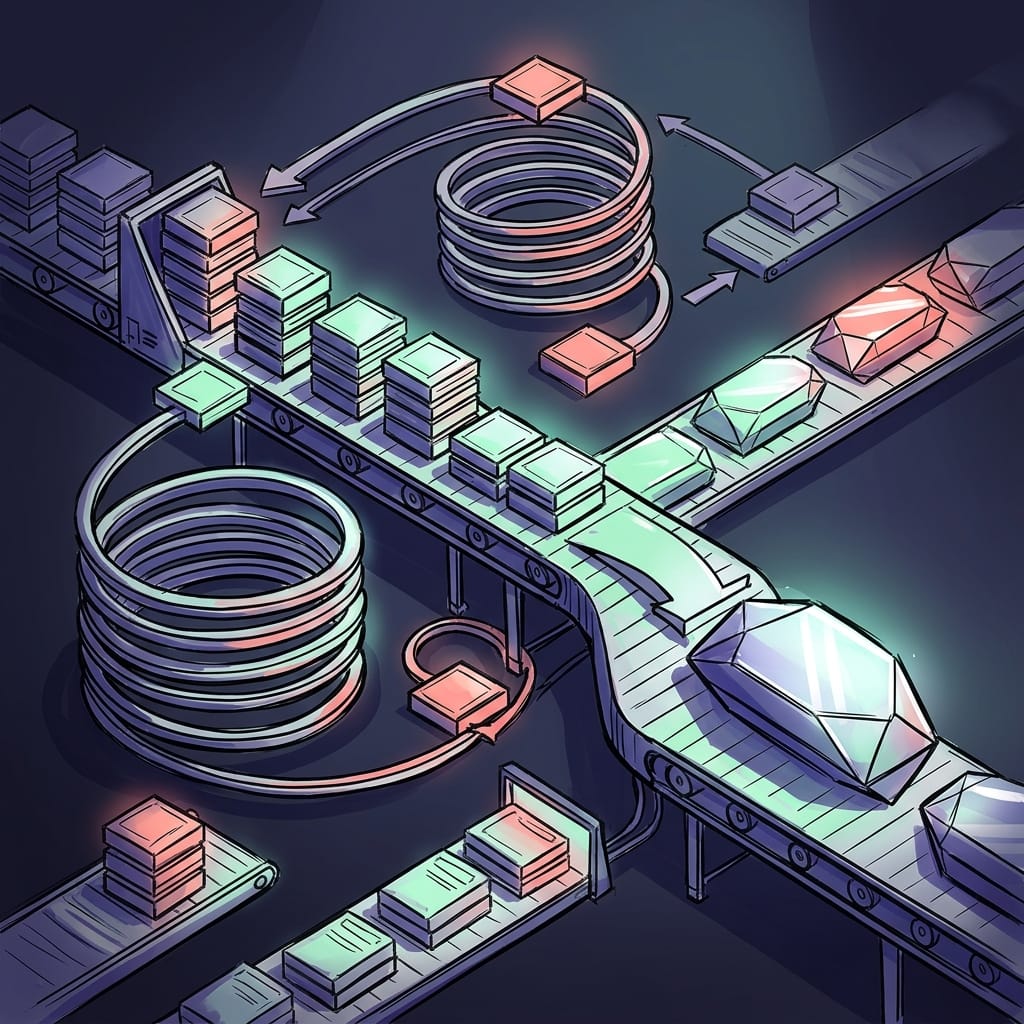

A simple architecture for doing this is to export workflow telemetry, normalize it, compute KPIs, and expose showback by team or repository.

```mermaid
flowchart TD
    A[GitHub Actions / Copilot Agents] --> B[Workflow Telemetry Export]
    B --> C[Normalize Events]
    C --> D[Token Efficiency KPIs]
    D --> E[Showback by Team / Repo]
    D --> F[Optimization Loop]
    F --> G[Prompt hygiene]
    F --> H[Retry reduction]
    F --> I[Quality gates]
    E --> J[FinOps dashboard]
```

What to notice: this is not a procurement dashboard. It is an optimization loop.

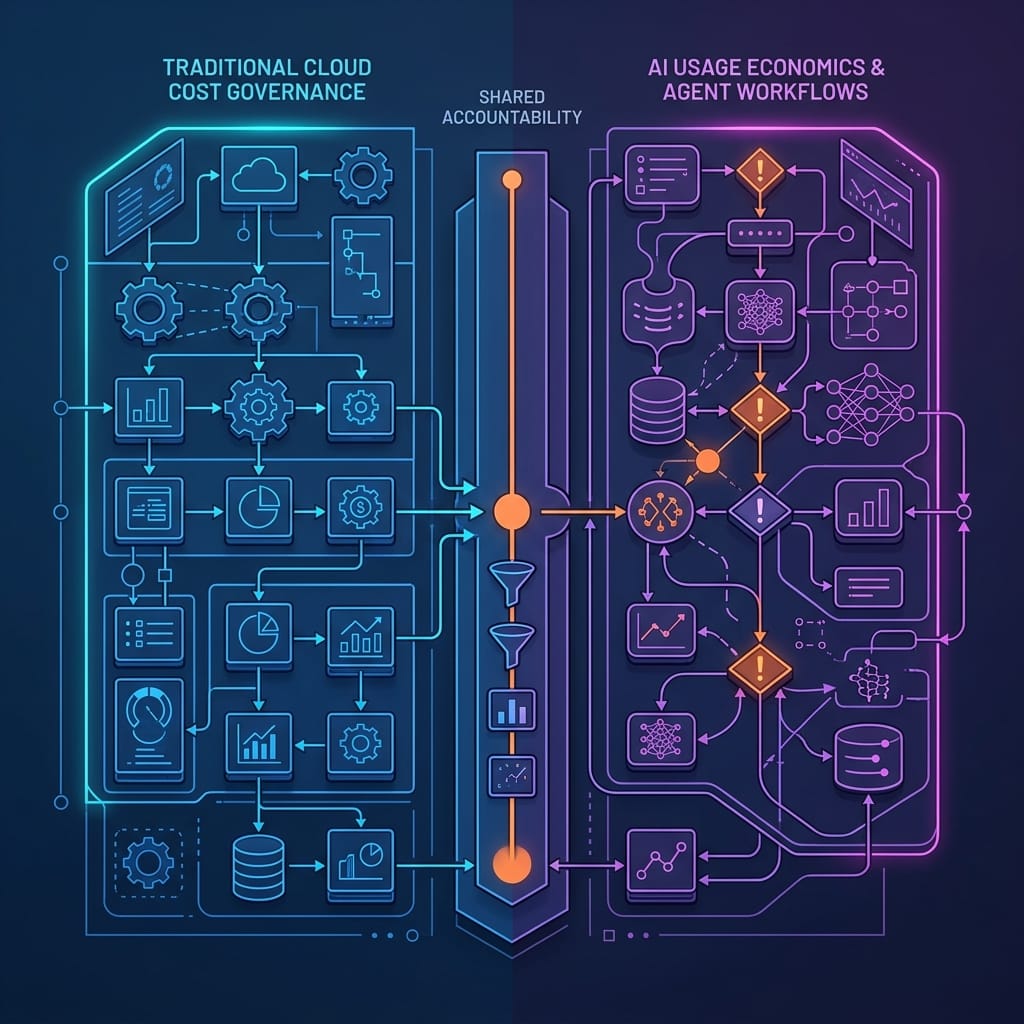

From cloud FinOps to AI FinOps: what carries over and what breaks

A lot of cloud FinOps thinking carries over cleanly.

You still need:

- accountability

- shared visibility

- showback or chargeback logic

- optimization loops

- business-value framing rather than raw spend panic

That aligns with Microsoft’s broader FinOps best practices around optimization, efficiency, and insight generation from operational data, and with the FinOps toolkit’s role in helping organizations adopt and automate FinOps capabilities.

But some familiar instincts break in AI workflows.

Cloud waste is often obvious: idle compute, orphaned disks, overprovisioned databases. AI waste is slipperier. More tokens do not automatically mean more value, but fewer tokens do not automatically mean better governance either. A larger context window may be justified for a complex refactor. A second pass may prevent a bad merge. The hidden tax is rework.

So the right mental model is not tokens per request. It is tokens per useful outcome.

And because AI waste is probabilistic, the scorecard must combine consumption, acceptance, and rework.

That means you should care about:

- tokens per completed task

- tokens per accepted PR

- tokens per merged change

- retry loops per successful outcome

- review burden created by AI-generated output

This is also why adoption metrics are vanity metrics when they stand alone. “Eighty percent of developers used Copilot this month” is not a financial or engineering outcome. It is a participation number.
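
A small worked example shows why tokens per request can point in the wrong direction. The two workflows and all of their numbers below are hypothetical, chosen only to illustrate the arithmetic; `per_unit` is an illustrative helper, not part of any tool.

```python
from typing import Optional

# Hypothetical totals for two workflows; none of these come from real telemetry.
workflows = {
    "small_prompts_many_retries": {"requests": 40, "tokens": 60_000, "merged_changes": 4},
    "larger_context_few_retries": {"requests": 10, "tokens": 45_000, "merged_changes": 5},
}


def per_unit(total: int, units: int) -> Optional[float]:
    """Divide safely; None signals that there is no outcome to attribute tokens to."""
    return round(total / units, 1) if units else None


report = {
    name: {
        "tokens_per_request": per_unit(w["tokens"], w["requests"]),
        "tokens_per_merged_change": per_unit(w["tokens"], w["merged_changes"]),
    }
    for name, w in workflows.items()
}

for name, metrics in report.items():
    print(name, metrics)
```

In this sketch the first workflow looks three times cheaper per request (1,500 versus 4,500 tokens) yet costs more per merged change (15,000 versus 9,000 tokens), because every retry is charged to the outcome it eventually produced.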

The metrics that actually matter in Copilot and agent workflows

If you want a practical starting point, create an internal scorecard with five layers:

- Adoption: Who is using AI, how often, and in which workflows?

- Token efficiency: How many tokens are consumed per completed task, accepted PR, or merged change?

- Quality-adjusted output: Are low-cost outputs actually getting accepted, merged, or retained?

- Rework: How much review churn, rollback, or correction follows AI-generated work?

- Policy compliance: Are workflows staying inside approved tool, data, and review boundaries?

Here is a lightweight example in Python that computes token efficiency KPIs per repository from exported workflow telemetry.

```python
# Compute token efficiency KPIs per repository from workflow telemetry.
# The inline rows stand in for an exported CSV, so values arrive as strings.

from collections import defaultdict

rows = [
    {"repo": "api", "task_completed": "1", "pr_accepted": "1", "retries": "0", "tokens": "3200", "cost": "0.64", "quality": "0.92"},
    {"repo": "api", "task_completed": "1", "pr_accepted": "0", "retries": "1", "tokens": "4100", "cost": "0.82", "quality": "0.70"},
    {"repo": "web", "task_completed": "1", "pr_accepted": "1", "retries": "0", "tokens": "2100", "cost": "0.42", "quality": "0.95"},
]

# Running totals per repository.
kpis = defaultdict(lambda: {"tokens": 0, "tasks": 0, "accepted_prs": 0, "retries": 0, "cost": 0.0, "quality_cost": 0.0})

for r in rows:
    repo = r["repo"]
    tokens = int(r["tokens"])
    cost = float(r["cost"])
    quality = float(r["quality"])
    kpis[repo]["tokens"] += tokens
    kpis[repo]["tasks"] += int(r["task_completed"])
    kpis[repo]["accepted_prs"] += int(r["pr_accepted"])
    kpis[repo]["retries"] += int(r["retries"])
    kpis[repo]["cost"] += cost
    # Dividing cost by quality inflates the cost of low-quality output.
    kpis[repo]["quality_cost"] += cost / max(quality, 0.01)

for repo, v in kpis.items():
    # max(..., 1) avoids division by zero when a repo has no tasks or accepted PRs.
    print({
        "repo": repo,
        "tokens_per_completed_task": round(v["tokens"] / max(v["tasks"], 1), 2),
        "tokens_per_accepted_pr": round(v["tokens"] / max(v["accepted_prs"], 1), 2),
        "retry_rate": round(v["retries"] / max(v["tasks"], 1), 2),
        "quality_adjusted_cost": round(v["quality_cost"], 2),
    })
```

Important caveat: these formulas are illustrative heuristics for internal experimentation, not standardized benchmarks or production scoring models. The point is to show the shape of a scorecard, not prescribe universal thresholds.

If your telemetry is messy, normalize it first. This example turns raw workflow events into a compact schema that downstream KPI calculations can actually use.

```python
# Normalize raw telemetry into a compact schema for downstream KPI calculations.

raw_events = [
    {"repository": "api", "team_slug": "platform", "input_tokens": 1200, "output_tokens": 2000, "status": "completed", "pr_state": "merged", "attempt": 1},
    {"repository": "api", "team_slug": "platform", "input_tokens": 1500, "output_tokens": 2600, "status": "completed", "pr_state": "closed", "attempt": 2},
]

normalized = []

for e in raw_events:
    normalized.append({
        "repo": e["repository"],
        "team": e["team_slug"],
        # Input and output tokens are summed into one consumption figure.
        "tokens": e["input_tokens"] + e["output_tokens"],
        "task_completed": 1 if e["status"] == "completed" else 0,
        "pr_accepted": 1 if e["pr_state"] == "merged" else 0,
        # The first attempt is not a retry.
        "retries": max(e["attempt"] - 1, 0),
    })

for item in normalized:
    print(item)
```

What to do next: define a minimum event schema before you argue about dashboards. If you cannot consistently identify repo, team, tokens, task outcome, PR outcome, and retry count, your governance conversation will stay stuck at anecdotes.
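
One way to enforce that minimum is a small validation step in front of the KPI calculations. This is a sketch under assumptions: the field names mirror the normalized events in the example above, and `REQUIRED_FIELDS` and `schema_problems` are hypothetical names, not part of any GitHub or Microsoft tooling.

```python
# Hypothetical minimum schema; field names follow the normalized events above.
REQUIRED_FIELDS = {
    "repo": str,
    "team": str,
    "tokens": int,
    "task_completed": int,
    "pr_accepted": int,
    "retries": int,
}


def schema_problems(event: dict) -> list[str]:
    """Return human-readable problems; an empty list means the event is usable."""
    problems = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in event:
            problems.append(f"missing field: {field}")
        elif not isinstance(event[field], expected_type):
            problems.append(f"wrong type for {field}: expected {expected_type.__name__}")
    return problems


good = {"repo": "api", "team": "platform", "tokens": 3200, "task_completed": 1, "pr_accepted": 1, "retries": 0}
bad = {"repo": "api", "tokens": "3200", "task_completed": 1, "pr_accepted": 1}

for event in (good, bad):
    print(schema_problems(event))
```

Tracking the share of events that fail this check is a useful early signal in its own right: if most events are rejected, the telemetry export needs work before any dashboard does.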

Where token waste really comes from

The biggest waste sources are usually not individual developer failures. They are design failures in the workflow:

- oversized prompts that ask for too much at once

- excessive repository context pulled into the session

- vague task framing that forces the agent to guess

- unconstrained autonomy across code, tools, or data

- repeated retries after low-quality first-pass output

- missing review checkpoints that allow bad output to travel downstream

This is why the most expensive workflow is not necessarily the one with the highest token count. The worst workflow is the one that burns tokens and still creates rework.

That rework is where many organizations undercount cost: extra reviewer time, more CI runs, more test failures, more rollback risk, and eventually lower trust in the workflows that actually could have created value.
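
To see how rework changes the picture, here is a minimal sketch that adds reviewer time and extra CI runs to token spend before dividing by merged changes. Every rate and total below is an assumption for illustration; `WorkflowPeriod`, `REVIEWER_HOURLY_RATE`, and `COST_PER_CI_RUN` are hypothetical names you would replace with your own figures.

```python
from dataclasses import dataclass


@dataclass
class WorkflowPeriod:
    """Hypothetical per-workflow totals for one reporting period."""
    token_cost: float      # model spend in your billing currency
    reviewer_hours: float  # human review time spent on AI-generated changes
    extra_ci_runs: int     # CI runs triggered by retries or fix-up commits
    merged_changes: int


# Assumed internal rates; replace with your own figures.
REVIEWER_HOURLY_RATE = 90.0
COST_PER_CI_RUN = 0.50


def loaded_cost_per_merged_change(p: WorkflowPeriod):
    """Token spend plus rework costs per merged change (None if nothing merged)."""
    if p.merged_changes == 0:
        return None
    rework = p.reviewer_hours * REVIEWER_HOURLY_RATE + p.extra_ci_runs * COST_PER_CI_RUN
    return round((p.token_cost + rework) / p.merged_changes, 2)


cheap_tokens_heavy_rework = WorkflowPeriod(token_cost=40.0, reviewer_hours=12.0, extra_ci_runs=60, merged_changes=10)
pricier_tokens_light_rework = WorkflowPeriod(token_cost=70.0, reviewer_hours=3.0, extra_ci_runs=10, merged_changes=10)

print(loaded_cost_per_merged_change(cheap_tokens_heavy_rework))
print(loaded_cost_per_merged_change(pricier_tokens_light_rework))
```

With these assumed rates, the workflow with the lower token bill is the more expensive one per merged change, which is exactly the undercounting described above.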

You can detect some of this with simple heuristics. For example, high-token sessions with multiple retries and no accepted PR usually point to prompt or context problems, not model-access problems.

```python
# Detect inefficient agent sessions by flagging high-token retries and low acceptance outcomes.

sessions = [
    {"session_id": "s1", "tokens": 1800, "retries": 0, "accepted_pr": 1},
    {"session_id": "s2", "tokens": 5200, "retries": 3, "accepted_pr": 0},
    {"session_id": "s3", "tokens": 4700, "retries": 2, "accepted_pr": 1},
]

def classify(session):
    # Rules are checked in order; the thresholds are illustrative, not calibrated.
    if session["tokens"] > 5000 and session["retries"] >= 2:
        return "prompt_or_context_issue"
    if session["accepted_pr"] == 0 and session["tokens"] > 3000:
        return "low_quality_spend"
    return "healthy"

for s in sessions:
    print({"session_id": s["session_id"], "classification": classify(s)})
```

Again, this kind of classification is directional. It is useful for surfacing optimization candidates, not for pretending that a toy rule set is a mature governance model.

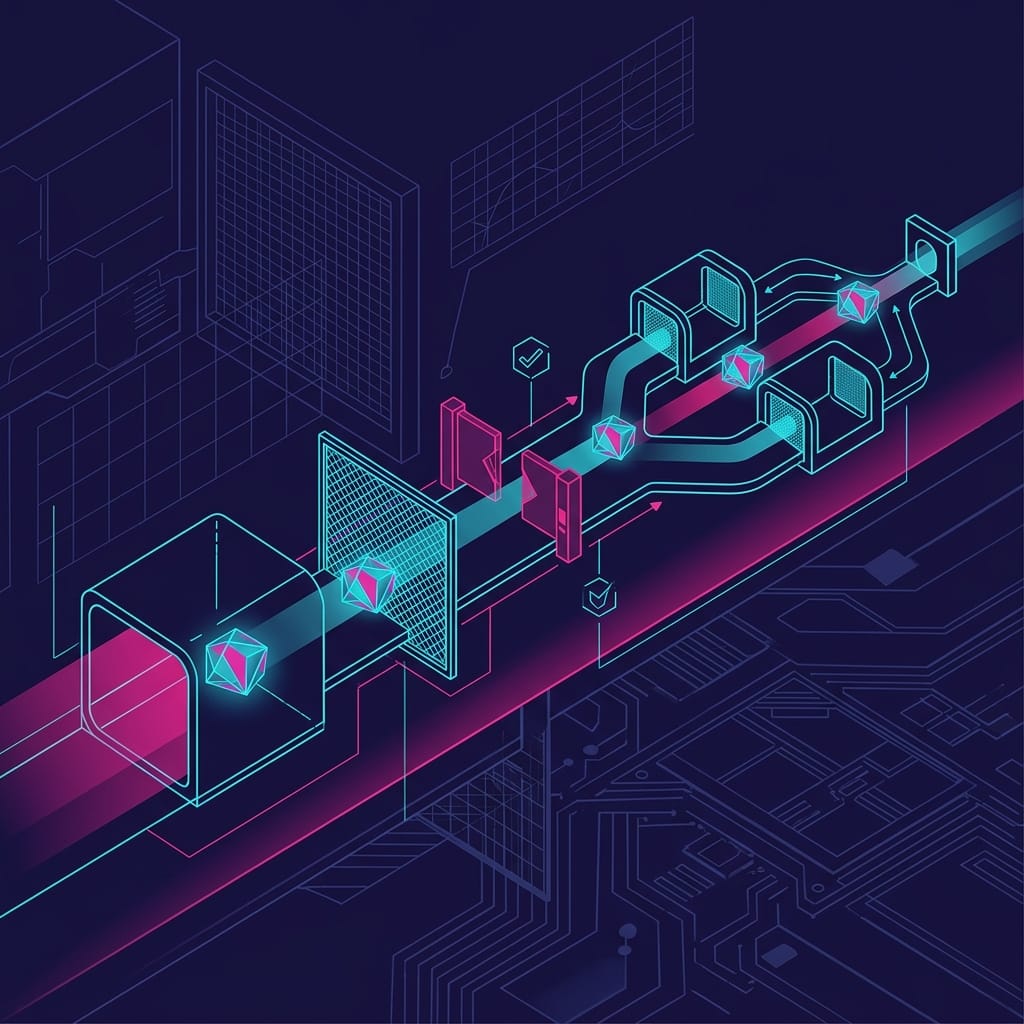

The new control plane: usage-shaping, not just access control

This is the opinionated part: the next generation of AI governance belongs closer to platform engineering than procurement.

Procurement can approve a vendor. Security can define guardrails. But neither function, by itself, can shape the economics of agent workflows. The cost controls that matter now are operational:

- prompt templates for recurring tasks

- bounded task scopes

- retrieval limits

- approval checkpoints before expensive actions

- mandatory human review for risky changes

- standard decomposition patterns for modernization, test generation, and performance work
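
Several of these controls can be expressed as a small policy check that runs before each agent step. The sketch below is illustrative only: `RunPolicy`, `next_action`, and the limits are hypothetical, and it assumes your orchestration layer can supply tokens used, attempt count, and an estimate for the next step.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunPolicy:
    """Hypothetical per-workflow limits set by the platform team."""
    max_tokens_per_task: int
    max_attempts: int
    approval_threshold_tokens: int  # above this estimate, a human must approve


def next_action(policy: RunPolicy, tokens_used: int, attempts: int, estimated_next_tokens: int) -> str:
    """Decide whether an agent may continue, must pause for approval, or must stop."""
    if attempts >= policy.max_attempts:
        return "stop_max_attempts"
    if tokens_used + estimated_next_tokens > policy.max_tokens_per_task:
        return "stop_budget_exceeded"
    if estimated_next_tokens > policy.approval_threshold_tokens:
        return "pause_for_approval"
    return "continue"


policy = RunPolicy(max_tokens_per_task=20_000, max_attempts=3, approval_threshold_tokens=8_000)

print(next_action(policy, tokens_used=2_000, attempts=1, estimated_next_tokens=3_000))
print(next_action(policy, tokens_used=2_000, attempts=1, estimated_next_tokens=9_000))
print(next_action(policy, tokens_used=15_000, attempts=2, estimated_next_tokens=6_000))
```

The ordering is a design choice: hard stops are checked before the approval checkpoint so that a human is never asked to approve a step the policy would reject anyway.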

Microsoft’s training on improving code performance with GitHub Copilot Agent is a good example of why this matters. Performance analysis and optimization are high-value tasks, but they can also become high-consumption loops if the agent is allowed to iterate across broad codebases without clear boundaries.

Task decomposition is the underappreciated control here. Smaller, bounded tasks usually reduce retries and improve acceptance rates. They also make cost attribution cleaner. A vague prompt like “modernize this service” is governance poison. A bounded task like “upgrade dependency X, update failing tests, and open a PR with only compatibility changes” is governable.
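
A bounded task can also be checked mechanically. As a sketch, assume each task declares the paths it is allowed to touch as glob patterns; a simple comparison against the changed files then shows whether the agent stayed in scope. `TaskSpec`, `out_of_scope_files`, and the file paths are hypothetical.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatch


@dataclass
class TaskSpec:
    """Hypothetical description of a bounded agent task."""
    goal: str
    allowed_paths: list[str] = field(default_factory=list)  # glob patterns


def out_of_scope_files(spec: TaskSpec, changed_files: list[str]) -> list[str]:
    """Return changed files that match none of the task's allowed glob patterns."""
    return [f for f in changed_files if not any(fnmatch(f, p) for p in spec.allowed_paths)]


spec = TaskSpec(
    goal="Upgrade dependency X, update failing tests, compatibility changes only",
    allowed_paths=["requirements.txt", "tests/*"],
)
changed = ["requirements.txt", "tests/test_api.py", "src/billing/core.py"]

print(out_of_scope_files(spec, changed))
```

A non-empty result does not have to block the PR; it can simply route the change to mandatory human review and tag the session for cost attribution.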

Put together, the operating checklist is simple:

- instrument the path from request to workflow execution

- capture tokens, attempts, and outcome signals

- connect PR acceptance or merge status back to the originating task

- aggregate by repo and team

- use the results to improve prompts, scope, and review gates
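
The aggregation step in that checklist can be sketched as a showback table keyed by team and repository. The events below are hypothetical, and the `cost` field is an assumption: your export would need to carry a cost per event or derive one from token counts and model pricing.

```python
from collections import defaultdict

# Hypothetical normalized events; "cost" is an assumed field, not a given.
events = [
    {"team": "platform", "repo": "api", "tokens": 3200, "cost": 0.64, "pr_accepted": 1},
    {"team": "platform", "repo": "api", "tokens": 4100, "cost": 0.82, "pr_accepted": 0},
    {"team": "frontend", "repo": "web", "tokens": 2100, "cost": 0.42, "pr_accepted": 1},
]

# Accumulate totals per (team, repo) pair.
totals = defaultdict(lambda: {"tokens": 0, "cost": 0.0, "accepted_prs": 0})
for e in events:
    bucket = totals[(e["team"], e["repo"])]
    bucket["tokens"] += e["tokens"]
    bucket["cost"] += e["cost"]
    bucket["accepted_prs"] += e["pr_accepted"]

total_cost = sum(b["cost"] for b in totals.values())

# One showback row per (team, repo), with each pair's share of total spend.
showback = [
    {
        "team": team,
        "repo": repo,
        "tokens": b["tokens"],
        "cost": round(b["cost"], 2),
        "cost_share_pct": round(b["cost"] / total_cost * 100, 1) if total_cost else 0.0,
        "accepted_prs": b["accepted_prs"],
    }
    for (team, repo), b in sorted(totals.items())
]

for row in showback:
    print(row)
```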

A pragmatic operating model for platform and FinOps teams

The workable model is joint ownership.

Platform engineering should define standard workflow patterns. Security should define data and tool boundaries. FinOps should define unit economics, showback logic, and optimization review cycles.

That is much closer to Microsoft’s FinOps toolkit philosophy than a pure procurement model. AI usage governance should evolve alongside those practices, not outside them.

A practical executive review should include:

- adoption trend by workflow type

- tokens per useful outcome

- quality-adjusted output

- rework rate

- anomaly rate

- policy exceptions

And it should be trend-based, not absolute. Different languages, repositories, and task types have different token profiles. The question is not “Which team used the fewest tokens?” The question is “Is token growth buying throughput and quality, or just buying more AI-generated churn?”
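
That question can be asked of the data directly by comparing growth rates between two periods. The totals below are hypothetical, and the rule used for the verdict is my own assumed reading of "buying throughput and quality", not an established standard.

```python
# Hypothetical period totals for one repository; not real telemetry.
previous = {"tokens": 400_000, "merged_changes": 50, "reworked_changes": 10}
current = {"tokens": 520_000, "merged_changes": 80, "reworked_changes": 12}


def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new, rounded to one decimal place."""
    return round((new - old) / old * 100, 1)


def rework_rate(period: dict) -> float:
    """Share of merged changes that later needed rework."""
    return period["reworked_changes"] / period["merged_changes"]


token_growth = pct_change(previous["tokens"], current["tokens"])
throughput_growth = pct_change(previous["merged_changes"], current["merged_changes"])
rework_delta = rework_rate(current) - rework_rate(previous)

# Assumed reading: spend is "buying throughput" when merged changes grow at
# least as fast as tokens and the rework rate does not get worse.
if throughput_growth >= token_growth and rework_delta <= 0:
    verdict = "token growth is buying throughput"
else:
    verdict = "token growth needs review"

print({"token_growth_pct": token_growth, "throughput_growth_pct": throughput_growth, "verdict": verdict})
```

In this example tokens grew 30% while merged changes grew 60% and the rework rate fell, so higher absolute consumption is not a problem in itself.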

If you want a simple scoring model to compare teams or repositories, use a weighted index that blends token efficiency, retry behavior, and quality-adjusted cost.

```python
# Score token efficiency with a weighted index to compare teams or repositories.

def efficiency_score(tokens_per_task, retry_rate, quality_adjusted_cost):
    # Each component is scaled to roughly 0-100 and floored at zero; higher is better.
    token_component = max(0.0, 100 - (tokens_per_task / 100))
    retry_component = max(0.0, 100 - (retry_rate * 100))
    cost_component = max(0.0, 100 - (quality_adjusted_cost * 20))
    # Weights: 50% token efficiency, 20% retry behavior, 30% quality-adjusted cost.
    return round((0.5 * token_component) + (0.2 * retry_component) + (0.3 * cost_component), 2)

samples = [
    {"name": "team-api", "tokens_per_task": 3650, "retry_rate": 0.18, "quality_adjusted_cost": 1.05},
    {"name": "team-web", "tokens_per_task": 2200, "retry_rate": 0.05, "quality_adjusted_cost": 0.44},
]

for s in samples:
    score = efficiency_score(s["tokens_per_task"], s["retry_rate"], s["quality_adjusted_cost"])
    print({"name": s["name"], "efficiency_score": score})
```

Same caveat here: this is a directional management aid for internal comparison, not a standardized benchmark and definitely not a blunt performance weapon.

Conclusion: the best AI cost strategy is better workflow design

Enterprises that only manage Copilot licenses are optimizing the least important variable.

The controllable economics of GitHub Copilot and agent workflows now live in prompt design, task decomposition, workflow boundaries, context discipline, and review loops. That is where waste is created. That is where value is protected. And that is why token efficiency should be treated as a first-class FinOps metric for AI-assisted software delivery.

Seat counts still matter for entitlement and security. They do not explain whether your AI workflows are economically sound.

Access is procurement. Efficiency is operations. Value is governance.

The winners in agentic software delivery will not be the organizations with the broadest access or the most permissive model catalog. They will be the ones that treat workflow design as a financial control system.

If you had to operationalize one metric tomorrow (tokens per merged change, retry rate, or review burden), which would you trust most, and why?

#FinOps #GitHubCopilot #PlatformEngineering

Sources & References

- What is FinOps? - Cloud Computing

- Copilot Metrics / KPI - Microsoft Q&A

- Configure AI agents for FinOps hubs - Cloud Computing

- CI/CD Integration with Modernize CLI - GitHub Copilot Modernization Agent

- FinOps toolkit overview - Cloud Computing

- FinOps best practices for general resource management - Cloud Computing

- Token counting in AI - Business Central

- FinOps toolkit roadmap - Cloud Computing

- Improve Code Performance using GitHub Copilot Agent - Training

- Accelerate development with GitHub Copilot Cloud Agent - Training

Try it yourself

Run this tutorial as a Jupyter notebook: Download runbook.ipynb (33 cells, 26 KB).