Teaching Copilot team-specific workflows with Agent Skills in Visual Studio

Copilot should learn your team, not impersonate your staff.

That is the real opportunity when you teach GitHub Copilot team-specific workflows in Visual Studio using skill-like patterns.

Most teams do not need Copilot acting like an autonomous engineer. They need it to stop forgetting how their team actually works: where business logic belongs, which tests are mandatory, what a pull request must include, and which steps are never optional.

A CIO asked me recently, “Why does every developer have to reteach Copilot our PR process?” That is exactly the problem this approach can help solve.

The practical thesis is simple: if you encode team-specific workflows as scoped guidance, reusable prompts, validation scripts, and repository-aware context, you get more consistent assistance than wiki pages, copied prompt snippets, or unmanaged Copilot usage. You also keep the right boundary in place: human review stays mandatory.

One important product-accuracy note up front: GitHub Copilot and Visual Studio already support prompt-driven, context-aware assistance, and Microsoft documents agent-oriented patterns in the broader Copilot ecosystem. But the examples below are implementation patterns and illustrative skill shapes, not a published Visual Studio schema.

In Q1, I watched a 14-developer .NET team lose two review cycles on a payments API because Copilot kept generating controller-heavy logic that violated the team’s service-layer rule, even though that rule was documented in Confluence.

This tutorial shows how to fix that class of problem in a hands-on way.

Step 1: Identify one repeatable workflow to encode

Do not start with “teach Copilot our whole engineering process.” That is too broad, too fragile, and too hard to validate.

Start with one bounded workflow that already has stable inputs, stable outputs, and clear review criteria. Good candidates include:

- adding a new API endpoint

- preparing a pull request

- validating a solution before review

- updating a data access layer with standard tests

- scaffolding a service that follows repository conventions

Why this beats wiki pages and prompt docs

A wiki page is passive. A copied prompt template is inconsistent. A workflow encoded into repo guidance is useful because it can show up inside the development flow, with the right context at the right moment.

That matters because GitHub Copilot agent-oriented workflows already emphasize structured documentation and iterative work across a codebase for tasks like fixing errors, refactoring, and feature development. Microsoft’s training makes the point clearly: documentation files are part of how the assistant is instructed effectively (GitHub Copilot agent mode training).

Define success before you define the workflow

For your first workflow, pick one or two measurable outcomes:

- fewer repeated prompts from developers

- fewer PR comments about conventions

- faster first-pass implementation

- better consistency in test suggestions

- fewer missed checklist items before review
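A baseline number makes the pilot easier to judge. Here is a minimal sketch of the arithmetic for "fewer PR comments about conventions". It assumes you can export review comments per PR and tag each one as convention-related. The `ReviewComment` shape and the tagging are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewComment:
    """Hypothetical export row: one review comment on one pull request."""
    pr_id: int
    is_convention: bool  # tagged by a reviewer or by a keyword rule you define


def convention_comments_per_pr(comments: list[ReviewComment], pr_count: int) -> float:
    """Convention-related comments divided by all PRs in the period.

    pr_count is passed explicitly because PRs with zero comments never
    appear in a comment export but still belong in the denominator.
    """
    if pr_count <= 0:
        raise ValueError("pr_count must be positive")
    return sum(1 for c in comments if c.is_convention) / pr_count


def relative_change(baseline: float, pilot: float) -> float:
    """Fractional change from baseline to pilot; negative means fewer comments."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (pilot - baseline) / baseline
```

Measure the baseline and the pilot over periods of the same length. For example, a drop from 1.0 to 0.5 convention comments per PR is a relative change of -0.5.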

Step 2: Gather the team context Copilot actually needs

This is where most teams either overdo it or underdo it.

They either dump an entire wiki into the workflow, or they provide one vague sentence like “follow our architecture.” Neither works well.

Collect the minimum useful context

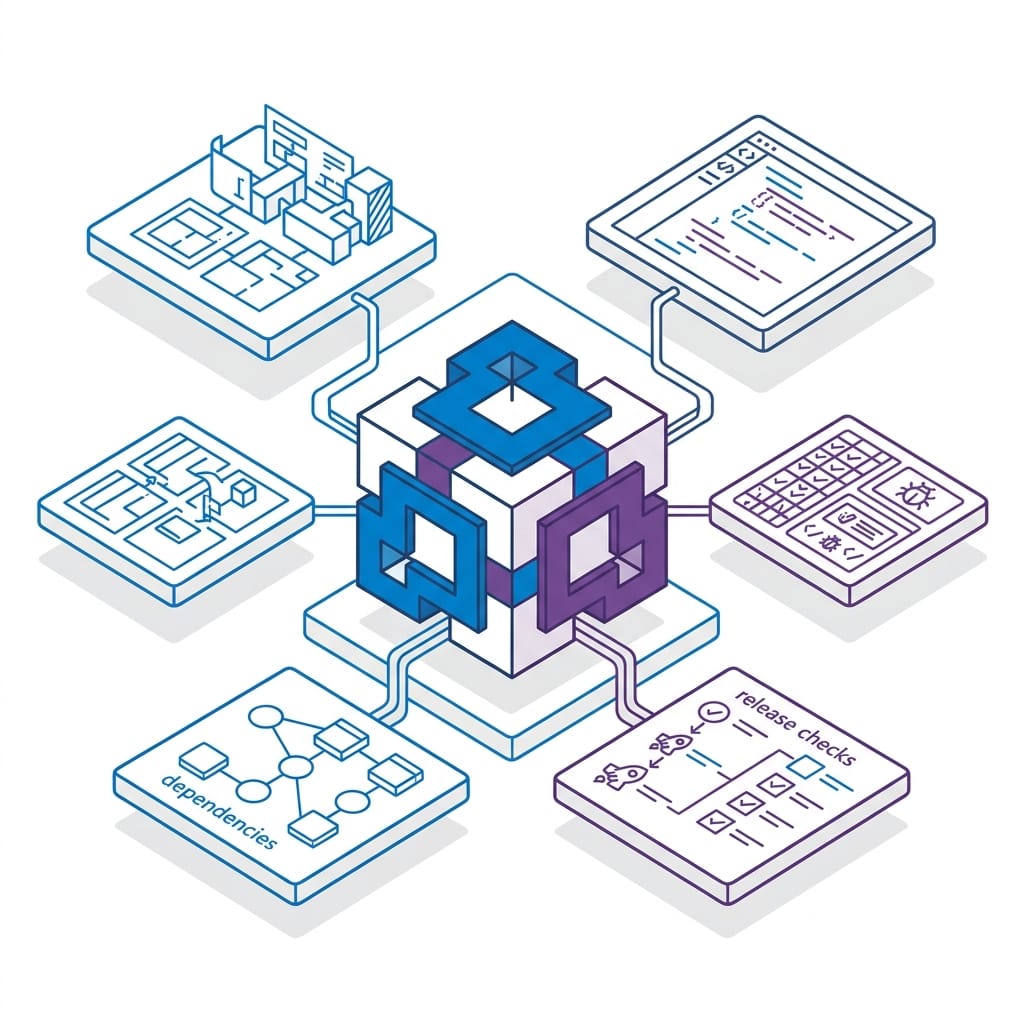

For a real Visual Studio team, the high-value categories are usually:

- architecture boundaries

- coding conventions

- dependency and layering rules

- test expectations

- PR and release checks

- documentation requirements

- security reminders tied to specific changes

The key is to separate durable guidance from task-specific instructions.

Durable guidance includes rules like:

- controllers stay thin

- services contain business logic

- use async for I/O-bound operations

- changed behavior requires unit tests

Task-specific instructions include things like:

- for this PR, update README if endpoints changed

- for this endpoint, ask for route, request model, and auth requirements before generating code
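One way to keep the two kinds of guidance separate is to store durable rules once and look up task-specific notes by task name. This is a sketch under stated assumptions: the task keys `prepare-pr` and `create-endpoint` and the `build_context` helper are hypothetical and are not part of any Copilot API.

```python
# Durable guidance applies to every task.
DURABLE_RULES = [
    "Controllers stay thin.",
    "Services contain business logic.",
    "Use async for I/O-bound operations.",
    "Changed behavior requires unit tests.",
]

# Task-specific notes are looked up by (hypothetical) task name.
TASK_RULES = {
    "prepare-pr": ["Update README if endpoints changed."],
    "create-endpoint": [
        "Ask for route, request model, and auth requirements before generating code."
    ],
}


def build_context(task: str) -> list[str]:
    """Durable rules first, then any task-specific rules; unknown tasks get durable rules only."""
    return DURABLE_RULES + TASK_RULES.get(task, [])
```

Durable rules are always included, so a task-specific note can add to the layering or testing rules but never silently replace them.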

Turn tribal knowledge into concise, reusable guidance

A short team guidance file is often more useful than a long narrative document because it is easier to inject consistently into the workflow.

Here is an example of the kind of concise guidance you want to maintain.

```markdown
<!-- TEAM_GUIDANCE.md: concise rules that an Agent Skill can inject as reusable context -->
# Team Guidance for C# Services

## Architecture rules

- Keep controllers thin; move business logic into services.
- Use `async` for I/O-bound operations.
- Register dependencies through `IServiceCollection`.

## Pull request checklist

- Run formatting before commit.
- Add or update unit tests for changed behavior.
- Document new endpoints in `README.md`.
```

What to observe: the guidance is brief, specific, and operational. It encodes rules the assistant can actually apply, rather than broad aspirations.
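If you want the file to stay short on purpose, a small check can enforce that. The sketch below assumes the file uses `## ` section headings and `- ` bullet rules, as in the example above. `parse_guidance` and `check_rule_budget` are hypothetical helpers. The default budget of 5 to 10 rules follows the pilot checklist in Step 8.

```python
def parse_guidance(markdown: str) -> dict[str, list[str]]:
    """Map each '## ' section heading to the '- ' bullet rules listed under it."""
    sections: dict[str, list[str]] = {}
    current = None
    for raw in markdown.splitlines():
        line = raw.strip()
        if line.startswith("## "):
            current = line[3:].strip()
            sections[current] = []
        elif line.startswith("- ") and current is not None:
            sections[current].append(line[2:].strip())
    return sections


def check_rule_budget(
    sections: dict[str, list[str]], low: int = 5, high: int = 10
) -> tuple[bool, int]:
    """Return (within_budget, total_rules) for the whole guidance file."""
    total = sum(len(rules) for rules in sections.values())
    return low <= total <= high, total
```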

Normalize ambiguous guidance into structured context

If your team’s checklists are currently scattered across docs and PR templates, it helps to convert them into a more structured format.

Here is an executable example:

```python
# normalize_guidance.py: convert a team checklist into structured JSON for skill context
import json

checklist = [
    "Run formatting before commit",
    "Add or update unit tests",
    "Document new endpoints in README.md",
]

rules = [{"id": i + 1, "text": item, "required": True} for i, item in enumerate(checklist)]

payload = {
    "skill": "dotnet-team-workflow",
    "category": "pull-request",
    "rules": rules,
}

print(json.dumps(payload, indent=2))
```

A practical rule for context collection

Prefer the smallest authoritative source that answers the question the workflow needs to answer.

Do not feed a 40-page engineering handbook into a task that only needs to know:

- where logic belongs

- what tests are required

- what commands must run before a PR

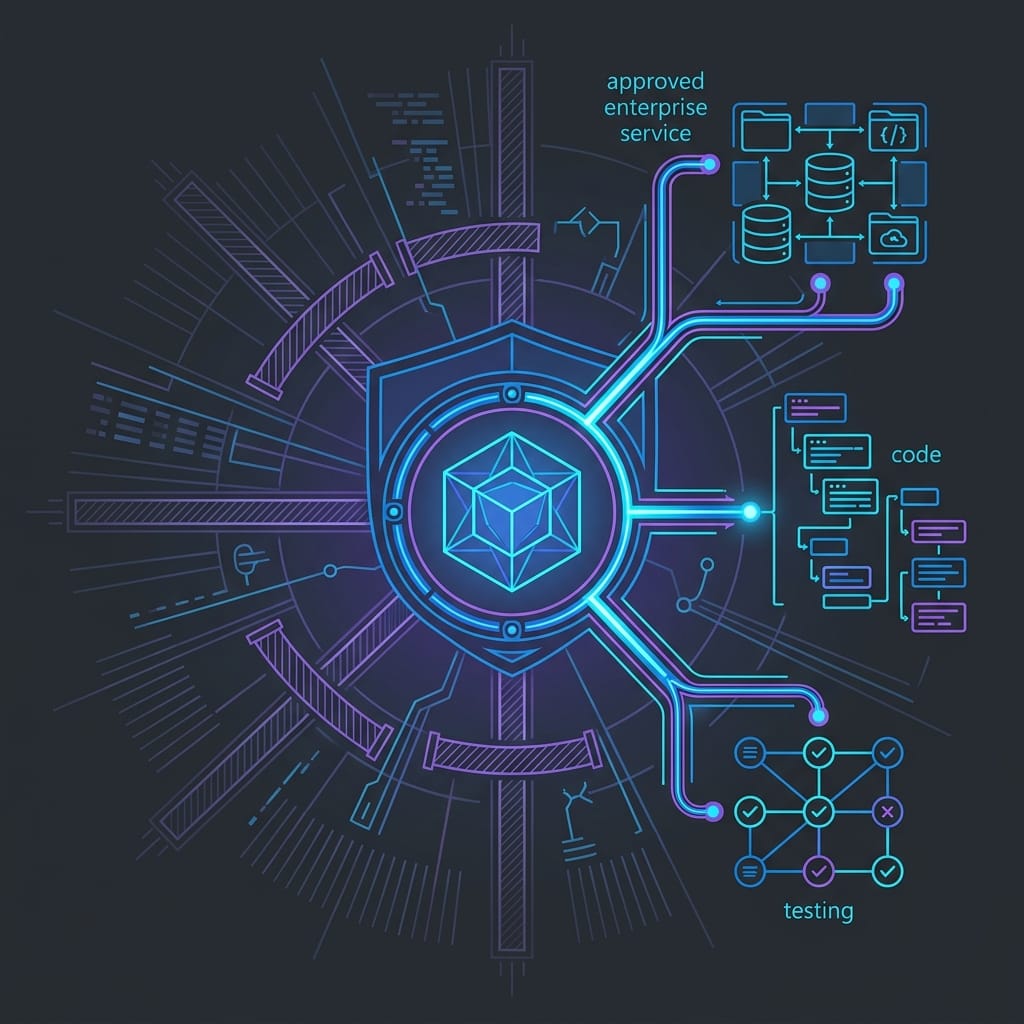

Step 3: Design the guardrails before you automate anything

Do not connect actions before you define boundaries.

For each workflow, write down:

- which repositories it can reference

- which solutions or projects it applies to

- which file patterns it should inspect

- which actions are read-only versus change-producing
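Scope is easier to review when it is written as data. Here is a minimal sketch of a file-pattern check. The `SCOPE` patterns are hypothetical examples, not a Visual Studio setting. That includes the `obj`/`bin` exclusions for build output.

```python
from fnmatch import fnmatchcase

# Hypothetical scope for one workflow. In fnmatch, '*' also matches '/', so
# 'src/*.cs' covers nested files such as 'src/Orders/OrderService.cs'.
SCOPE = {
    "include": ["src/*.cs", "tests/*.cs"],
    "exclude": ["*/obj/*", "*/bin/*"],  # build output, including generated .cs files
}


def in_scope(path: str, scope: dict = SCOPE) -> bool:
    """True if the repo-relative path matches an include pattern and no exclude pattern."""
    normalized = path.replace("\\", "/")  # Windows tooling may report backslashes
    if any(fnmatchcase(normalized, pattern) for pattern in scope["exclude"]):
        return False
    return any(fnmatchcase(normalized, pattern) for pattern in scope["include"])
```

`fnmatchcase` is used instead of `fnmatch` so the result is case-sensitive and does not depend on the operating system the check runs on.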

A useful default is:

- code suggestions: developer reviews before accepting

- PR preparation: developer validates checklist and commands

- infrastructure changes: human approval always required

- external system actions: explicit approval and environment controls

- production-impacting decisions: never delegated
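You can also capture these defaults as a lookup table, so tooling and reviewers read the same policy. In this sketch the action names are hypothetical. The design choice worth copying is the fallback: any unknown action is treated as never delegated instead of getting the loosest level.

```python
from enum import Enum


class Approval(Enum):
    DEVELOPER_REVIEW = "developer reviews before accepting"
    DEVELOPER_VALIDATES = "developer validates checklist and commands"
    HUMAN_APPROVAL = "human approval always required"
    EXPLICIT_APPROVAL = "explicit approval and environment controls"
    NEVER_DELEGATED = "never delegated"


# Hypothetical action names mapped to the defaults listed above.
POLICY = {
    "code-suggestion": Approval.DEVELOPER_REVIEW,
    "pr-preparation": Approval.DEVELOPER_VALIDATES,
    "infrastructure-change": Approval.HUMAN_APPROVAL,
    "external-system-action": Approval.EXPLICIT_APPROVAL,
    "production-decision": Approval.NEVER_DELEGATED,
}


def required_approval(action: str) -> Approval:
    """Look up the approval level; unknown actions fall back to the strictest level."""
    return POLICY.get(action, Approval.NEVER_DELEGATED)
```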

Also create a lightweight ownership record:

- owner

- version

- review cadence

- approved repositories

- approved actions

- rollback path
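The ownership record can live next to the workflow files as plain data. Here is a sketch that uses a dataclass with hypothetical field names and expresses the review cadence in days.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class WorkflowOwnership:
    owner: str
    version: str
    review_cadence_days: int
    last_reviewed: date
    approved_repositories: list[str] = field(default_factory=list)
    approved_actions: list[str] = field(default_factory=list)
    rollback_path: str = ""

    def next_review(self) -> date:
        """Date the guidance is due for its next review."""
        return self.last_reviewed + timedelta(days=self.review_cadence_days)

    def is_review_due(self, today: date) -> bool:
        """True on or after the next review date."""
        return today >= self.next_review()
```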

Treat these assets like internal platform artifacts, not clever prompt files.

Step 4: Define the workflow shape around a bounded task

Now you are ready to define the workflow itself.

Again, a product-accuracy note: Visual Studio and GitHub Copilot document prompt-based and agent-oriented assistance, but they do not document a native “Agent Skill” file format in the exact form below. Treat the definition that follows as an illustrative shape, not a published schema.

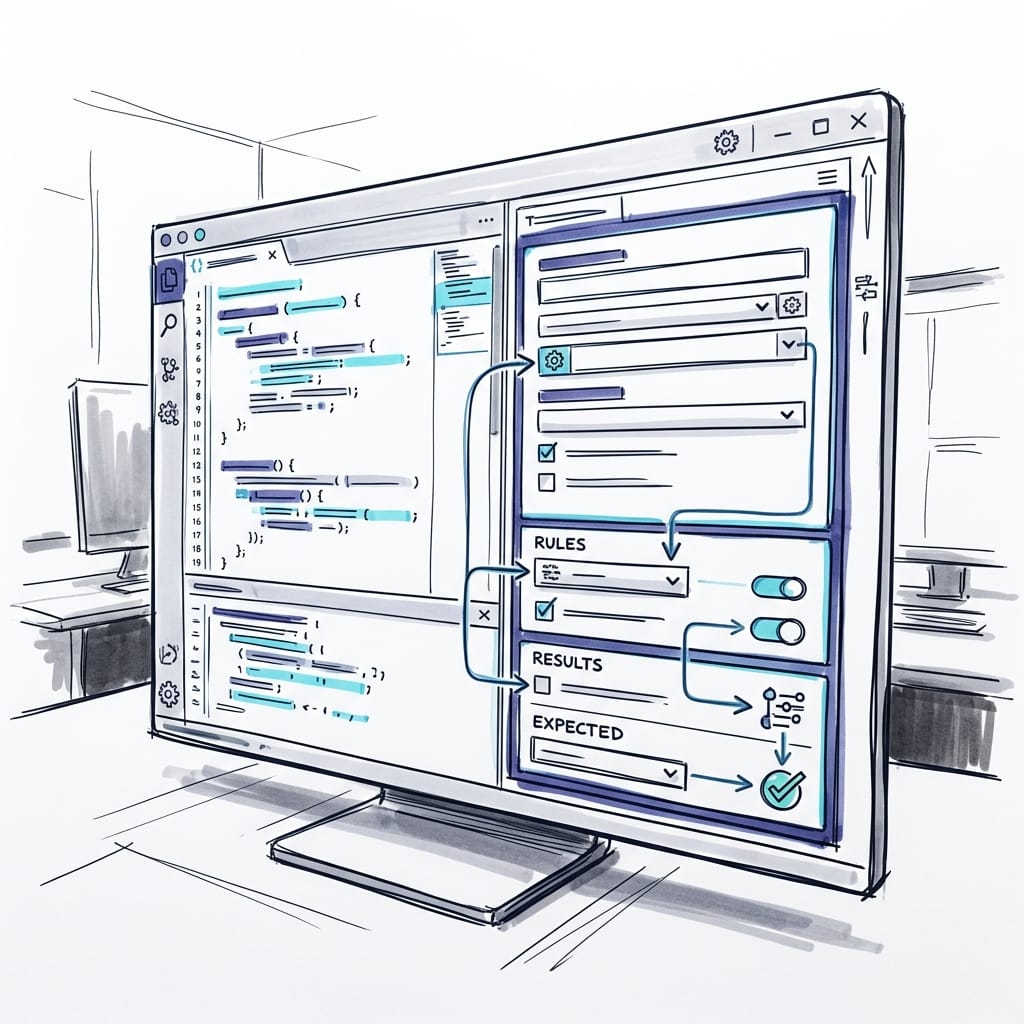

Your first workflow should state four things clearly:

- purpose

- scope

- expected inputs

- explicit non-goals

For example, a team workflow for a .NET repository might help with endpoint creation, PR preparation, and validation checks, while staying limited to source and test files in a known solution.

Here is an illustrative skill definition, written as JSON with a header comment. Treat it as pseudocode, not a schema:

```jsonc
// agent-skill.json: illustrative shape for a team-specific skill (not a published Visual Studio schema)
{
  "name": "dotnet-team-workflow",
  "description": "Applies repository conventions for C# services, tests, and pull request readiness.",
  "triggers": [
    "create endpoint",
    "prepare pull request",
    "validate solution"
  ],
  "inputs": {
    "solution": "*.sln",
    "changedFiles": ["src/**/*.cs", "tests/**/*.cs"]
  },
  "outputs": ["checklist", "commands", "code-suggestions"]
}
```
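If you build your own tooling around a definition like this, such as a pre-flight script or a prompt picker, a naive trigger matcher might look like the sketch below. It is a hypothetical helper and does not describe how Copilot chooses context. The `SKILLS` list mirrors the JSON above without its comment, because standard JSON does not allow comments.

```python
from typing import Optional

SKILLS = [
    {
        "name": "dotnet-team-workflow",
        "triggers": ["create endpoint", "prepare pull request", "validate solution"],
    },
]


def match_skill(request: str, skills: list[dict] = SKILLS) -> Optional[str]:
    """Return the first skill whose trigger phrase appears in the request, else None."""
    text = " ".join(request.lower().split())  # lowercase and collapse whitespace
    for skill in skills:
        if any(trigger.lower() in text for trigger in skill["triggers"]):
            return skill["name"]
    return None
```

Note that `match_skill("Prepare this PR for review")` returns `None`. Substring matching misses paraphrases, which is one reason to agree on a few trigger phrases your team will actually use.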

A strong definition should answer:

- When should Copilot use this?

- What repository or solution does it apply to?

- What files matter?

- What should the output look like?

- What should it refuse to do?

Examples of explicit non-goals:

- do not make architecture decisions without developer input

- do not modify deployment files

- do not infer security requirements if they are missing

- do not guess route, auth, or data contract details; ask clarifying questions when they are unclear
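The questions above translate directly into a completeness check. The field names in this sketch are hypothetical and differ from the JSON example (for instance, `purpose` instead of `description`), so adapt the keys to the shape your team settles on.

```python
# Hypothetical field names, each mapped to the question a strong definition should answer.
REQUIRED = {
    "purpose": "What is this workflow for?",
    "triggers": "When should Copilot use this?",
    "scope": "What repository or solution does it apply to?",
    "inputs": "What files matter?",
    "outputs": "What should the output look like?",
    "nonGoals": "What should it refuse to do?",
}


def unanswered_questions(definition: dict) -> list[str]:
    """Return the questions whose field is missing or empty in the definition."""
    return [question for key, question in REQUIRED.items() if not definition.get(key)]
```

A definition with an empty `nonGoals` list fails the check. That is deliberate, because the non-goals are what keep the workflow bounded.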

Step 5: Put the files in the repo and test the workflow in Visual Studio

This is where the tutorial becomes practical.

A simple repo layout looks like this:

- `/docs/TEAM_GUIDANCE.md`

- `/docs/prompt-template.md`

- `/eng/validate-workflow.ps1`

- `/eng/skill-context.json`

- solution file at the repo root or in `/src`
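A quick script can confirm the layout before anyone starts prompting. This sketch assumes repo-relative paths and checks only for the files listed above. `missing_layout_items` and `repo_paths` are hypothetical helpers.

```python
from pathlib import Path

EXPECTED_FILES = [
    "docs/TEAM_GUIDANCE.md",
    "docs/prompt-template.md",
    "eng/validate-workflow.ps1",
    "eng/skill-context.json",
]


def missing_layout_items(paths: set[str]) -> list[str]:
    """Given repo-relative POSIX paths, list missing expected files and a missing solution."""
    missing = [p for p in EXPECTED_FILES if p not in paths]
    has_solution = any(
        p.endswith(".sln")
        and (p.count("/") == 0 or (p.startswith("src/") and p.count("/") == 1))
        for p in paths
    )
    if not has_solution:
        missing.append("*.sln at the repo root or in src/")
    return missing


def repo_paths(root: Path) -> set[str]:
    """Collect repo-relative file paths as POSIX strings, skipping the .git folder."""
    paths = set()
    for p in root.rglob("*"):
        rel = p.relative_to(root)
        if p.is_file() and ".git" not in rel.parts:
            paths.add(rel.as_posix())
    return paths
```

Run from the repository root, `print(missing_layout_items(repo_paths(Path("."))))` lists whatever is still missing.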

A concrete execution sequence:

- Add `TEAM_GUIDANCE.md` under `/docs`.

- Add `validate-workflow.ps1` under `/eng`.

- Add a small structured context file if your rules are easier to maintain as JSON.

- Open the solution in Visual Studio.

- Open GitHub Copilot Chat in Visual Studio.

- Reference the relevant files in your prompt or paste the reusable prompt pattern.

- Ask a bounded request such as “Prepare this PR for review using TEAM_GUIDANCE.md and the changed files.”

- Review the output, run the suggested commands, and refine the guidance in a PR if the result is off.

A practical first test set:

- “Prepare this PR for review.”

- “Add a new endpoint using our service pattern.”

- “Validate whether these changes meet team conventions.”

That gives you a repeatable loop: guidance file, prompt, output, review, PR update.

Step 6: Encode conventions the assistant can apply consistently

This is where the workflow becomes genuinely useful.

Focus on conventions with visible review impact:

- layering rules

- naming patterns

- logging requirements

- async usage

- dependency registration patterns

- test expectations

- PR checklist reminders

Here is an example of a normalized context file:

```jsonc
// skill-context.json: normalized guidance that can be bundled with the Agent Skill
{
  "architecture": {
    "controllerRule": "Controllers delegate to services",
    "asyncRule": "Use async for I/O-bound operations",
    "testingRule": "Changed behavior requires unit tests"
  },
  "workflow": {
    "preCommit": ["dotnet format", "dotnet build", "dotnet test"],
    "prNotes": ["Update README for endpoint changes"]
  }
}
```

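And here is a small reference implementation the assistant can mirror:

```csharp
// OrderService.cs: a small service that follows team conventions Copilot can mirror
using System.Threading;
using System.Threading.Tasks;

// Data access sits behind an interface so the service can be unit tested.
public interface IOrderRepository
{
    Task<int> SaveAsync(string customerId, CancellationToken cancellationToken);
}

// Business logic lives in the service; controllers only delegate to it.
public sealed class OrderService
{
    private readonly IOrderRepository _repository;

    // Dependencies arrive through constructor injection (registered via IServiceCollection).
    public OrderService(IOrderRepository repository) => _repository = repository;

    // Async I/O, with the cancellation token passed through to the repository.
    public Task<int> CreateAsync(string customerId, CancellationToken cancellationToken) =>
        _repository.SaveAsync(customerId, cancellationToken);
}
```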
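A reference implementation shows the pattern, and a check catches drift from it, such as the controller-heavy code in the payments example earlier. The sketch below is a heuristic, not a parser. It flags any file ending in `Controller.cs` that mentions an `I...Repository` type, on the assumption that controllers should reach data only through a service. `layering_warnings` is a hypothetical helper.

```python
import re

# Matches interface-style repository types such as IOrderRepository.
REPOSITORY_TYPE = re.compile(r"\bI\w*Repository\b")


def layering_warnings(path: str, source: str) -> list[str]:
    """Warn when a controller file references a repository interface directly."""
    file_name = path.replace("\\", "/").split("/")[-1]
    if not file_name.endswith("Controller.cs"):
        return []
    found = sorted(set(REPOSITORY_TYPE.findall(source)))
    return [
        f"{path}: controller depends on {name} directly; move data access into a service"
        for name in found
    ]
```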

One of the highest-value rules is a clarification rule: if required inputs are missing, ask before generating code.

For example:

- endpoint route missing

- auth model missing

- repository interface missing

- test framework expectation unclear

- target project not specified
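You can make the clarification rule concrete as a list of required inputs. Here is a minimal sketch that assumes an endpoint request arrives as a dictionary with the hypothetical keys shown. The question wording is also illustrative.

```python
# Hypothetical request fields for an "add endpoint" task.
REQUIRED_INPUTS = {
    "route": "What route should the endpoint use?",
    "auth": "What authentication and authorization model applies?",
    "repository_interface": "Which repository interface should the service use?",
    "test_framework": "Which test framework should the tests target?",
    "target_project": "Which project should the code go into?",
}


def clarifying_questions(request: dict) -> list[str]:
    """Return one question for every required input that is missing or blank."""
    return [
        question
        for key, question in REQUIRED_INPUTS.items()
        if not str(request.get(key) or "").strip()
    ]
```

If the returned list is non-empty, the workflow asks those questions instead of generating code.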

Step 7: Connect the workflow to repeatable local actions

A workflow becomes more practical when it supports actions your team already repeats.

Start with safe, local repository checks rather than external systems. That aligns with Microsoft’s documented workflow direction in Copilot Studio: explicit flow design and testability, not magic (Copilot Studio workflows overview).

Here is an executable example of a standard validation script:

```powershell
# validate-workflow.ps1: a standard repo check an Agent Skill could invoke or recommend
param(
    [string]$Solution = "TeamApp.sln"
)

dotnet format $Solution --verify-no-changes
if ($LASTEXITCODE -ne 0) { throw "Formatting check failed." }

dotnet build $Solution --nologo
if ($LASTEXITCODE -ne 0) { throw "Build failed." }

dotnet test $Solution --no-build --nologo
if ($LASTEXITCODE -ne 0) { throw "Tests failed." }

Write-Host "Repository workflow checks passed."
```

A useful workflow should return structured outputs developers can act on quickly:

- a short checklist

- recommended commands

- targeted code suggestions

- a summary of missing information

- PR notes based on changed files
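If you assemble these outputs in your own scripts, or ask for them in a fixed order, a single container keeps them consistent. `WorkflowResult` is a hypothetical type. It skips empty sections so the summary stays short.

```python
from dataclasses import dataclass, field


@dataclass
class WorkflowResult:
    checklist: list[str] = field(default_factory=list)
    commands: list[str] = field(default_factory=list)
    code_suggestions: list[str] = field(default_factory=list)
    missing_information: list[str] = field(default_factory=list)
    pr_notes: list[str] = field(default_factory=list)

    def to_markdown(self) -> str:
        """Render non-empty sections in a fixed order so output is easy to scan and diff."""
        sections = [
            ("Checklist", self.checklist),
            ("Commands", self.commands),
            ("Code suggestions", self.code_suggestions),
            ("Missing information", self.missing_information),
            ("PR notes", self.pr_notes),
        ]
        lines: list[str] = []
        for title, items in sections:
            if items:
                lines.append(f"## {title}")
                lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)
```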

Here is an executable example of checklist generation. The path logic is intentionally simplified for illustration; production code should normalize paths and use repository-aware matching rather than relying only on string checks.

```csharp
// PullRequestChecklistBuilder.cs: generate a team-aligned checklist from changed files
using System.Collections.Generic;
using System.Linq;

public static class PullRequestChecklistBuilder
{
    public static IReadOnlyList<string> Build(IEnumerable<string> changedFiles)
    {
        var files = changedFiles.ToArray();
        var items = new List<string> { "Run dotnet format", "Run solution tests" };

        if (files.Any(f => f.Contains("Controllers")))
            items.Add("Document endpoint changes in README.md");

        if (files.Any(f => f.Contains("src/")))
            items.Add("Confirm unit tests cover changed behavior");

        return items;
    }
}
```
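The text above recommends normalized, repository-aware matching for production. Here is a sketch of that idea in Python. It compares whole path segments instead of substrings, so paths like `docs/ControllersGuide.md` and `tests/src/OrderTests.cs` no longer trigger the wrong items. It assumes repo-relative paths and exact-case folder names. `build_checklist` is a hypothetical port, not the C# class above.

```python
from pathlib import PureWindowsPath


def build_checklist(changed_files: list[str]) -> list[str]:
    """Segment-aware checklist: matches folder names, not substrings of the path."""
    # PureWindowsPath accepts both '\\' and '/' separators, so one parser covers both styles.
    parts = [PureWindowsPath(f).parts for f in changed_files]
    items = ["Run dotnet format", "Run solution tests"]
    # Only folders count here (parts[:-1]), so a file named Controllers.md does not match.
    if any("Controllers" in p[:-1] for p in parts):
        items.append("Document endpoint changes in README.md")
    # The top-level folder must be exactly 'src'.
    if any(p and p[0] == "src" for p in parts):
        items.append("Confirm unit tests cover changed behavior")
    return items
```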

Step 8: Use a concrete “do this now” checklist

If you want to pilot this approach this week, do this:

- create `docs/TEAM_GUIDANCE.md`

- add 5 to 10 team rules only

- create `eng/validate-workflow.ps1`

- define 3 trigger phrases your team will actually use

- add one reusable prompt template

- test 3 real prompts in Visual Studio Copilot Chat

- review outputs in a pull request

- version changes to guidance like any other engineering asset

That is enough to move from “Copilot knows generic C#” to “Copilot responds in a way that resembles our team.”

Step 9: Test with realistic prompts and failure cases

Do not test with toy prompts like “create a service.” Test with backlog-shaped requests and messy real-world inputs.

Examples:

- “Prepare this PR for review and tell me what I missed.”

- “Add a new endpoint for order creation using our service pattern.”

- “Validate whether these changes meet team conventions before I open the PR.”

- “Refactor this controller logic into the service layer.”
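To make these tests repeatable, write down what a good answer must mention and check pasted responses against it. This sketch assumes you copy Copilot Chat responses into a dictionary by hand. The expected phrases are hypothetical, and a phrase check only catches omissions, not poor code.

```python
# Hypothetical test cases: each realistic prompt lists phrases a good answer should contain.
CASES = [
    {
        "prompt": "Prepare this PR for review and tell me what I missed.",
        "expect": ["dotnet format", "dotnet test", "README.md"],
    },
    {
        "prompt": "Add a new endpoint for order creation using our service pattern.",
        "expect": ["service", "route"],
    },
]


def missing_expectations(response: str, expected: list[str]) -> list[str]:
    """Case-insensitive check for expected phrases; returns the ones not found."""
    text = response.lower()
    return [phrase for phrase in expected if phrase.lower() not in text]


def evaluate(responses: dict[str, str], cases: list[dict] = CASES) -> dict[str, list[str]]:
    """Map each prompt to its missing phrases; prompts without a response miss everything."""
    return {
        case["prompt"]: missing_expectations(responses.get(case["prompt"], ""), case["expect"])
        for case in cases
    }
```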

Here is an example of a reusable prompt pattern:

```markdown
<!-- prompt-template.md: a reusable prompt pattern for team-specific Copilot behavior -->
# Skill Prompt Template

You are assisting with a C# repository in Visual Studio.

Apply these team rules:

- Prefer services over controller logic.
- Recommend `dotnet format`, build, and test before PR.
- If endpoints change, remind the developer to update `README.md`.

Return:

1. A short checklist
2. Any suggested commands
3. Minimal code changes aligned to the rules
```
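If the rules also live in `skill-context.json`, you can generate the rules section of this template from that file so the two never drift. This sketch assumes the context file is plain JSON. Remove the `//` header comment first, because standard JSON has no comments. `load_context` and `render_rules` are hypothetical helpers.

```python
import json


def load_context(path: str) -> dict:
    """Read skill-context.json; assumes plain JSON with no comments."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


def render_rules(context: dict) -> str:
    """Render an 'Apply these team rules:' section from the normalized context."""
    workflow = context.get("workflow", {})
    lines = ["Apply these team rules:"]
    lines += [f"- {rule.rstrip('.')}." for rule in context.get("architecture", {}).values()]
    commands = workflow.get("preCommit", [])
    if commands:
        lines.append("- Before opening a PR, run: " + ", ".join(f"`{c}`" for c in commands) + ".")
    lines += [f"- {note.rstrip('.')}." for note in workflow.get("prNotes", [])]
    return "\n".join(lines)
```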

When the workflow fails, classify the reason:

- stale guidance

- vague rule wording

- missing example

- too-broad scope

- insufficient clarification behavior

Then fix the asset, not just the prompt.
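If you log each failure with one of these reasons, the pattern becomes visible after a few weeks. The sketch below maps each reason to the asset that usually needs the fix. That mapping is a hypothetical starting point; adjust it to your own repository.

```python
from collections import Counter
from enum import Enum


class FailureReason(Enum):
    STALE_GUIDANCE = "stale guidance"
    VAGUE_RULE = "vague rule wording"
    MISSING_EXAMPLE = "missing example"
    BROAD_SCOPE = "too-broad scope"
    NO_CLARIFICATION = "insufficient clarification behavior"


# Hypothetical mapping from failure reason to the asset that usually needs the fix.
ASSET_TO_FIX = {
    FailureReason.STALE_GUIDANCE: "docs/TEAM_GUIDANCE.md",
    FailureReason.VAGUE_RULE: "docs/TEAM_GUIDANCE.md",
    FailureReason.MISSING_EXAMPLE: "reference code such as OrderService.cs",
    FailureReason.BROAD_SCOPE: "the skill definition's triggers and inputs",
    FailureReason.NO_CLARIFICATION: "docs/prompt-template.md",
}


def fix_plan(log: list[FailureReason], n: int = 3) -> list[tuple[str, int, str]]:
    """Most frequent reasons first, each with its count and the asset to revisit."""
    return [(r.value, count, ASSET_TO_FIX[r]) for r, count in Counter(log).most_common(n)]
```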

Step 10: Keep human review where it belongs

This final guardrail matters.

Keep explicit human approval for:

- architectural trade-offs

- security-sensitive changes

- infrastructure changes

- production-impacting decisions

- compliance-relevant modifications

- any external system action beyond bounded local workflows

The strongest pattern I see is this: teams get better results when they stop asking Copilot to be an autonomous engineer and start teaching it to be a reliable participant in their existing delivery system.

If you are using GitHub Copilot in Visual Studio today, the next practical move is not another giant prompt doc. It is one governed, repo-backed workflow for one repeatable task.

Start there. Test it hard. Version it. Expand only when it earns trust.

If you had to encode one team workflow this quarter—PR prep, endpoint scaffolding, validation checks, or refactoring rules—which would you start with and why?

#GitHubCopilot #VisualStudio #DeveloperExperience #DevEx

Sources & References

- Building Applications with GitHub Copilot Agent Mode - Training — supports the use of structured documentation and agent-oriented workflows with Copilot.

- Agent flows and workflows overview - Microsoft Copilot Studio — supports the discussion of explicit workflow design, actions, and testing in the broader Microsoft agent ecosystem.

- Microsoft 365 Copilot hub — supports the claim that Microsoft frames enterprise Copilot extensibility around governed customization.

- Microsoft 365 developer documentation - Microsoft 365 Developer — supports the discussion of controlled extensions tied to organizational systems and policies.

- Create and publish agents with Microsoft Copilot Studio - Training — supports the lifecycle point around creating, publishing, and managing agent assets.

- Azure developer documentation — supports the broader Microsoft developer tooling context for AI-enabled application workflows.
