The enterprise case for data sovereignty in autonomous AI systems

Data sovereignty is no longer compliance theater. Autonomous AI turns it into an architectural survival requirement.

Autonomous AI changes the stakes of governance because the system is no longer just reading data—it is deciding, retrieving, and acting across enterprise boundaries. Once agents can take action, sovereignty becomes a control boundary for limiting blast radius.

The uncomfortable truth: autonomous AI turns governance gaps into operational risk

The industry still rewards the wrong things first. Teams obsess over model quality, response latency, and how fast they can get a demo into an executive meeting, while treating sovereignty as something procurement will “sort out” with contract language.

That mindset fails the moment an agent can do more than answer a question.

A passive chatbot with weak controls can expose information. An autonomous agent with weak controls can retrieve from multiple systems, chain tool calls, trigger workflows, open tickets, modify records, and persist decisions without a human in the loop. That is a different risk profile.

This is why I take a hard position: enterprise leaders should treat data sovereignty as a design requirement for autonomous AI systems, not a legal afterthought.

In Q4, a 14-person internal platform team I advised had six copilots connected across Microsoft 365, ServiceNow, and a finance knowledge base before anyone could answer a basic incident question: which identity each agent used when it wrote back to a ticketing system.

That is the real sovereignty problem. The practical questions are:

- Where is the data processed?

- Which service boundary does it cross?

- Which identity is actually being used?

- Which logs exist?

- How fast can access be revoked or reconfigured?

If your architecture cannot answer those questions quickly, you do not have autonomous AI under control. You have distributed delegation with unclear accountability.

Why sovereignty becomes a design requirement once agents can act

Once agents can act, sovereignty becomes inseparable from action scope.

You can see this clearly in enterprise platforms like Microsoft 365 Copilot, Power Platform, and Intune: agents increasingly operate across sensitive operational data, workflows, connectors, and enterprise APIs. The same control-boundary logic applies well beyond Microsoft—to any cloud or SaaS ecosystem where agents can retrieve broadly and act with delegated authority.

The conventional argument says: “We’ll start small and tighten controls later.”

That is backwards.

The more connectors, plugins, workflow permissions, and line-of-business integrations an agent has, the more important it is to know exactly where data flows and under what controls. Sovereignty is what keeps autonomy from becoming uncontrolled delegation.

A simple way to explain this to technical and non-technical stakeholders is to separate retrieval permissions from action permissions. The pattern below is illustrative pseudocode, not production-ready policy code.

# Policy model separating retrieval permissions from action permissions.
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class SovereigntyPolicy:
    # Maps each actor to the data sources it may read from.
    retrieval: Dict[str, Set[str]] = field(default_factory=dict)
    # Maps each actor to the tools it may invoke.
    actions: Dict[str, Set[str]] = field(default_factory=dict)

    def can_retrieve(self, actor: str, source: str) -> bool:
        return source in self.retrieval.get(actor, set())

    def can_act(self, actor: str, tool: str) -> bool:
        return tool in self.actions.get(actor, set())


policy = SovereigntyPolicy(
    retrieval={"finance-agent": {"eu-sales-lake", "policy-wiki"}},
    actions={"finance-agent": {"ticketing-api"}},
)

print(policy.can_retrieve("finance-agent", "eu-sales-lake"))  # True: approved source
print(policy.can_act("finance-agent", "eu-sales-lake"))  # False: a readable source is not an invocable tool

What to observe: access to a data source does not automatically grant the right to trigger a tool. That separation is the beginning of sovereign design.

Hosted AI dependence is rising, and that raises the bar for control boundaries

Enterprises are not going to self-host every AI workload. They should not. Hosted AI services accelerate deployment and reduce operational burden.

But hosted dependence raises the bar for clarity.

You need to know:

- the service boundary,

- the data residency model,

- the retention behavior,

- the audit surface,

- the portability options,

- and the fallback plan if that hosted dependency becomes unacceptable.

This is why service-boundary clarity matters. Microsoft 365 Copilot Chat, for example, documents where prompts and responses are processed and what enterprise protections apply. Whether you use that product or not, that is the standard leaders should demand from every hosted AI dependency.

Sovereignty does not mean pretending every workload must be fully self-hosted. It means understanding and shaping dependency boundaries so the organization remains in control of risk, evidence, and operational options.

There is a fair counterpoint here: excessive sovereignty controls can slow delivery if applied indiscriminately. I agree. The answer is not blanket friction—it is risk-tiered control. Low-risk retrieval use cases should move faster than agents that can write to finance, HR, identity, or production systems.
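One way to make risk-tiered control concrete is a small classifier that maps a use case to a tier and a tier to a control set. This is a minimal sketch: the tier names, the `HIGH_IMPACT_SYSTEMS` set, and the control lists are all hypothetical placeholders, not a recommended baseline.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    LOW = 1     # read-only retrieval from non-sensitive sources
    MEDIUM = 2  # sensitive retrieval, or writes to lower-impact systems
    HIGH = 3    # writes to finance, HR, identity, or production systems


# Hypothetical identifiers for the high-impact systems named in the text.
HIGH_IMPACT_SYSTEMS = {"finance", "hr", "identity", "production"}

# Hypothetical control sets; each higher tier adds to the one below it.
CONTROLS = {
    RiskTier.LOW: ["audit logging"],
    RiskTier.MEDIUM: ["audit logging", "scoped connector allow-list"],
    RiskTier.HIGH: ["audit logging", "scoped connector allow-list", "human approval"],
}


def classify(can_write: bool, target_system: str, sensitive_data: bool) -> RiskTier:
    """Assign a tier from write authority, target system, and data sensitivity."""
    if can_write and target_system in HIGH_IMPACT_SYSTEMS:
        return RiskTier.HIGH
    if can_write or sensitive_data:
        return RiskTier.MEDIUM
    return RiskTier.LOW


print(classify(False, "policy-wiki", False).name)
print(CONTROLS[classify(True, "finance", True)])
```

The point of the shape is that friction attaches to the tier, so a read-only helper never inherits the approval path of an agent that writes to finance.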

The four control planes that actually matter

If you want a practical operating model, implement sovereignty across four control planes.

1) Identity

Every agent should operate with least privilege, explicit delegation, and revocable credentials. Avoid broad inherited access through convenience integrations.

If an agent uses Microsoft Graph, line-of-business APIs, or Power Platform connectors, the exact app identity, delegated scope, and consent path matter. “It runs under the user” is not a sufficient answer.

For a quick first pass, inventory the applications and service principals behind your Azure-connected agent apps. The script below produces a first-pass inventory report that requires tenant-specific validation and enrichment before formal review.

# Inventory Azure-connected agent apps and export principal metadata for review.
# Assumes the Az module and an authenticated session (Connect-AzAccount).
$apps = Get-AzADApplication | Select-Object DisplayName, AppId, Id
$spns = Get-AzADServicePrincipal | Select-Object DisplayName, AppId, Id

$joined = foreach ($app in $apps) {
    # Match each app registration to its service principal by AppId, if one exists.
    $sp = $spns | Where-Object { $_.AppId -eq $app.AppId } | Select-Object -First 1

    [pscustomobject]@{
        DisplayName       = $app.DisplayName
        AppId             = $app.AppId
        ApplicationObject = $app.Id
        ServicePrincipal  = $sp.Id   # empty when no matching service principal was found
    }
}

$joined | Export-Csv -Path ".\azure-agent-principals.csv" -NoTypeInformation
$joined

What to do next: map each app to its owner, and flag anything without a named business and security owner. Unowned principals are sovereignty debt.
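The ownership check can be sketched in a few lines once the export has been enriched. The example assumes someone has added `BusinessOwner` and `SecurityOwner` columns to the CSV by hand; those column names and every sample value are hypothetical, and the sample is inlined so the sketch runs without a tenant.

```python
import csv
import io

# Hypothetical sample shaped like azure-agent-principals.csv after manual
# enrichment with two owner columns. All values are made up.
SAMPLE = """DisplayName,AppId,ApplicationObject,ServicePrincipal,BusinessOwner,SecurityOwner
finance-agent,app-001,obj-001,sp-001,finance-ops,secops
ticket-bot,app-002,obj-002,sp-002,,secops
legacy-copilot,app-003,obj-003,,,
"""


def unowned(rows):
    """Return display names of apps missing a business owner or a security owner."""
    flagged = []
    for row in rows:
        if not row["BusinessOwner"].strip() or not row["SecurityOwner"].strip():
            flagged.append(row["DisplayName"])
    return flagged


rows = list(csv.DictReader(io.StringIO(SAMPLE)))
print(unowned(rows))
```

Requiring both owners is deliberate: an app with a security contact but no business owner still has nobody accountable for why it has access.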

2) Configuration control

Prompts, connectors, tool permissions, environment settings, grounding sources, and policy objects are governed configuration. They are not harmless builder choices.

Copilot Studio governance guidance is useful here because it explicitly ties configuration to residency, DLP, compliance, and environment controls. That is the signal: configuration is part of governance.
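Treating configuration as governed usually starts with detecting when a running agent no longer matches what was approved. The sketch below fingerprints a configuration and lists the keys that drifted. The configuration keys are hypothetical, and this is a generic pattern rather than anything Copilot Studio exposes.

```python
import hashlib
import json


def fingerprint(config: dict) -> str:
    """Stable SHA-256 over a canonical JSON rendering of the configuration."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def drifted_keys(approved: dict, running: dict) -> list:
    """Top-level keys whose values differ between approved and running config."""
    keys = set(approved) | set(running)
    return sorted(k for k in keys if approved.get(k) != running.get(k))


# Hypothetical approved baseline for one agent.
approved = {
    "prompt_version": "v3",
    "connectors": ["sharepoint-eu", "ticketing-api"],
    "grounding_sources": ["policy-wiki"],
}
# The running copy has quietly gained a write-capable connector.
running = dict(approved, connectors=["sharepoint-eu", "ticketing-api", "crm-write-api"])

print(fingerprint(approved) == fingerprint(running))
print(drifted_keys(approved, running))
```

Storing the approved fingerprint alongside the change record gives reviewers a cheap equality check before they need to read a full diff.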

3) Data governance

This is where platforms like Microsoft Purview become relevant. Classification, lineage, policy enforcement, and auditability are not optional if agents can traverse from low-risk content into regulated or high-impact systems.
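The traversal risk can be illustrated with a classification ceiling: each agent may read only sources labeled at or below its clearance. This is a toy model with hypothetical labels, agents, and sources. It does not call Purview or any other product API.

```python
# Hypothetical sensitivity labels, ordered from least to most restricted.
LABEL_RANK = {"public": 0, "internal": 1, "confidential": 2, "regulated": 3}

# Hypothetical per-agent ceilings and per-source classifications.
AGENT_CEILING = {"helpdesk-agent": "internal", "finance-agent": "confidential"}
SOURCE_LABEL = {
    "policy-wiki": "internal",
    "eu-sales-lake": "confidential",
    "payroll-db": "regulated",
}


def may_read(agent: str, source: str) -> bool:
    """Allow a read only when the source is classified and within the agent's ceiling."""
    ceiling = AGENT_CEILING.get(agent)
    label = SOURCE_LABEL.get(source)
    if ceiling is None or label is None:
        return False  # unknown agents and unclassified sources are denied
    return LABEL_RANK[label] <= LABEL_RANK[ceiling]


print(may_read("helpdesk-agent", "policy-wiki"))
print(may_read("helpdesk-agent", "eu-sales-lake"))
print(may_read("finance-agent", "payroll-db"))
```

Denying unclassified sources by default is the design choice that matters here: it turns missing classification into a visible failure instead of silent access.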

4) Runtime defense-in-depth

Security guidance across enterprise AI increasingly points to the same model: identity, governance, runtime enforcement, and detection all matter. No single perimeter will save you once agents orchestrate across systems.

A lightweight middleware layer can enforce sovereignty checks before retrieval or actions are executed. This is a minimal pattern, not production-ready policy code.

# Middleware enforcing sovereignty checks before retrieval or action execution.
from dataclasses import dataclass


@dataclass
class Request:
    actor: str
    kind: str  # "retrieve" or "act"
    target: str
    region: str


class Middleware:
    def __init__(self, retrieval_allowed, action_allowed, allowed_region="EU"):
        self.retrieval_allowed = retrieval_allowed
        self.action_allowed = action_allowed
        self.allowed_region = allowed_region

    def authorize(self, req: Request) -> bool:
        # Out-of-region requests are denied before any policy callback runs.
        if req.region != self.allowed_region:
            return False
        if req.kind == "retrieve":
            return self.retrieval_allowed(req.actor, req.target)
        if req.kind == "act":
            return self.action_allowed(req.actor, req.target)
        # Unknown request kinds are denied rather than treated as actions.
        return False


mw = Middleware(lambda a, t: t == "eu-sales-lake", lambda a, t: t == "ticketing-api")

print(mw.authorize(Request("finance-agent", "retrieve", "eu-sales-lake", "EU")))  # True

What to observe: the authorization decision happens before execution, and region is part of the decision rather than an ignored field.

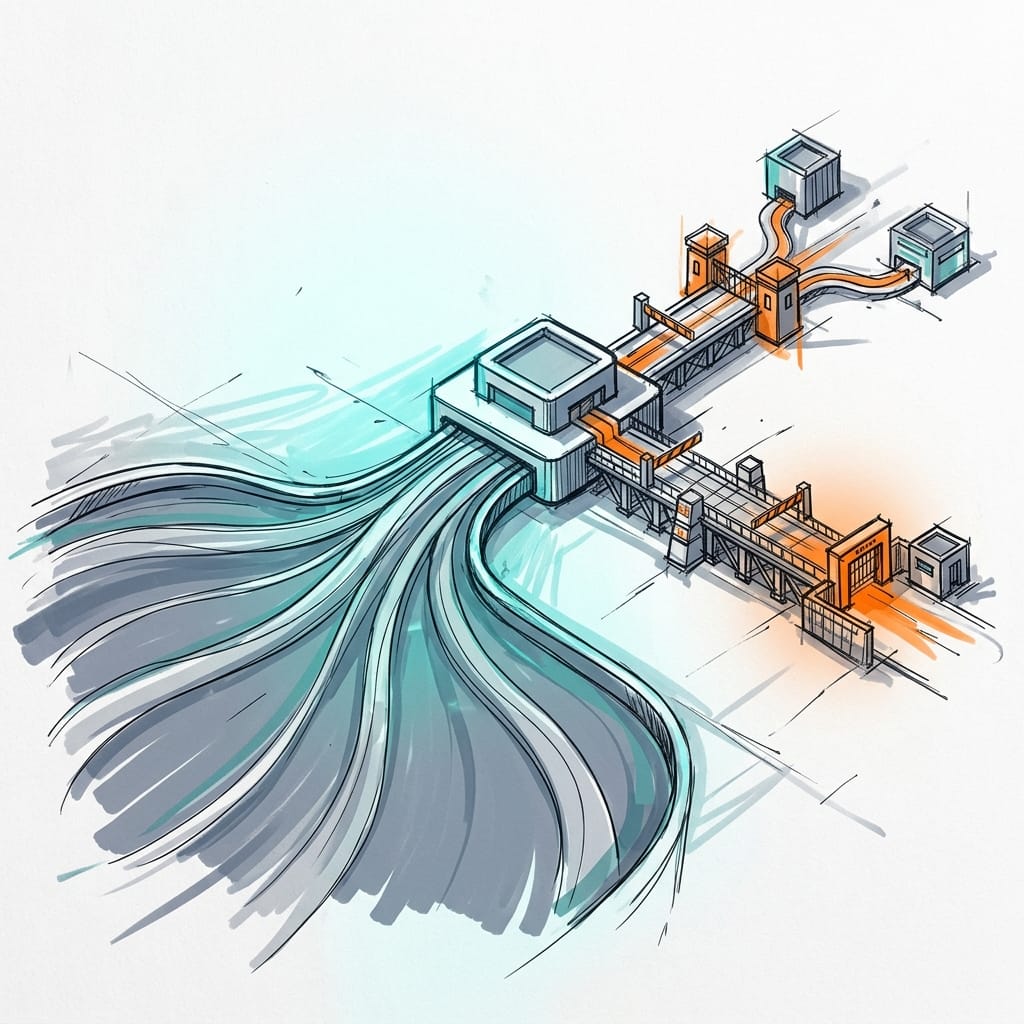

A practical architecture stance: governed data plane, constrained action plane

Here is the architecture stance I recommend:

Let agents reason over a governed data plane first. Then require narrower, policy-checked paths for actions.

That means:

- retrieval from approved and classified sources,

- explicit region and connector policy,

- separate authorization for write operations,

- and audit logs for every attempted invocation.

Whether you use Microsoft Fabric, another cloud data platform, or a mixed SaaS estate, the underlying lesson is the same: agentic systems need a coherent data plane and shared business semantics, not just a model endpoint.

The simplest way to explain the flow is:

- the user interacts with an autonomous agent,

- the agent calls policy middleware before doing anything,

- the middleware evaluates whether the request is retrieval or action,

- retrieval is limited to approved data sources,

- actions are limited to approved enterprise tools,

- and both paths generate audit evidence for governance review.
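The flow above can be sketched as a single gateway that decides, records evidence, and only then lets the caller proceed. The `Gateway` class and its field names are hypothetical, and a real deployment would write to durable, tamper-evident storage rather than an in-memory list.

```python
from dataclasses import dataclass, field


@dataclass
class Gateway:
    approved_sources: set
    approved_tools: set
    audit_log: list = field(default_factory=list)

    def handle(self, actor: str, kind: str, target: str) -> bool:
        """Decide whether a request may proceed and record the decision."""
        if kind == "retrieve":
            allowed = target in self.approved_sources
        elif kind == "act":
            allowed = target in self.approved_tools
        else:
            allowed = False  # unrecognized request kinds are denied
        # Evidence is recorded for denied attempts as well as allowed ones.
        self.audit_log.append(
            {"actor": actor, "kind": kind, "target": target, "allowed": allowed}
        )
        return allowed


gw = Gateway(approved_sources={"eu-sales-lake"}, approved_tools={"ticketing-api"})
gw.handle("finance-agent", "retrieve", "eu-sales-lake")
gw.handle("finance-agent", "act", "crm-write-api")
print(gw.audit_log)
```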

If you are using Copilot Studio or Power Platform, this also means environment isolation and scoped connectors. Experimentation should not inherit production authority by default. Development, test, and production environments should have different connector availability, DLP policies, and approval paths.
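Environment isolation can be expressed as data and checked mechanically. In this sketch the environment names, connector names, and the `PRODUCTION_ONLY` set are hypothetical. In Power Platform the equivalent rules would live in DLP policies and environment settings, not in application code.

```python
# Hypothetical connector availability per environment.
ENVIRONMENTS = {
    "dev": {"sharepoint-eu"},
    "test": {"sharepoint-eu", "ticketing-api"},
    "prod": {"sharepoint-eu", "ticketing-api", "finance-write-api"},
}

# Hypothetical connectors that carry production write authority.
PRODUCTION_ONLY = {"finance-write-api"}


def connector_available(environment: str, connector: str) -> bool:
    """True when the connector is enabled in the named environment."""
    return connector in ENVIRONMENTS.get(environment, set())


def environments_with_production_authority(environments: dict) -> list:
    """Non-production environments that expose a production-only connector."""
    return sorted(
        name
        for name, connectors in environments.items()
        if name != "prod" and connectors & PRODUCTION_ONLY
    )


print(connector_available("dev", "finance-write-api"))
print(environments_with_production_authority(ENVIRONMENTS))
```

Running the second check in a pipeline turns "experimentation should not inherit production authority" from a principle into a failing build.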

Auditability is the real executive test of sovereignty

Boards and audit committees do not care whether your architecture diagram looks elegant. They care whether you can reconstruct what happened.

The executive test is brutally simple:

- What did the agent see?

- Why did it act?

- Which identity did it use?

- Which systems did it touch?

- Which policy allowed or denied the step?

If your AI platform team cannot answer those questions in an incident review, the system is not mature enough for broad autonomy.

This is where sovereignty becomes evidence, not branding. Useful platform capabilities exist across lineage, service-boundary documentation, and security controls—but they only matter if you operationalize them with logs, policy traces, and environment-level accountability.

A minimal audit pattern for tool invocations looks like this. It is illustrative pseudocode, not production-ready policy code.

# Audit logger for every tool invocation with region and decision metadata.
from datetime import datetime, timezone
import json


def audit_event(actor: str, operation: str, target: str, allowed: bool, region: str) -> str:
    event = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "operation": operation,
        "target": target,
        "allowed": allowed,
        "region": region,
    }
    line = json.dumps(event, separators=(",", ":"))
    print(line)
    return line


audit_event("finance-agent", "retrieve", "eu-sales-lake", True, "EU")
audit_event("finance-agent", "act", "crm-write-api", False, "US")

What to do next: make sure your real implementation records actor, operation, target, allow/deny result, timestamp, and region or environment context. Ownership, logging, and policy traceability should come together in one reviewable control story.
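Logging only pays off if the records can be turned back into answers during an incident review. The sketch below reconstructs one agent's activity from JSON audit lines that use the fields listed above (`ts`, `actor`, `operation`, `target`, `allowed`, `region`). The sample lines and timestamps are made up, and `reconstruct` is a hypothetical helper name.

```python
import json

# Hypothetical audit lines, one JSON object per line.
LINES = [
    '{"ts":"2025-01-10T09:00:05+00:00","actor":"finance-agent","operation":"act","target":"crm-write-api","allowed":false,"region":"US"}',
    '{"ts":"2025-01-10T09:00:00+00:00","actor":"finance-agent","operation":"retrieve","target":"eu-sales-lake","allowed":true,"region":"EU"}',
    '{"ts":"2025-01-10T09:00:02+00:00","actor":"hr-agent","operation":"retrieve","target":"policy-wiki","allowed":true,"region":"EU"}',
]


def reconstruct(lines, actor):
    """Summarize what one actor touched, what was denied, and in which regions."""
    events = [json.loads(line) for line in lines]
    # ISO-8601 timestamps with the same offset sort correctly as strings.
    mine = sorted((e for e in events if e["actor"] == actor), key=lambda e: e["ts"])
    return {
        "touched": sorted({e["target"] for e in mine if e["allowed"]}),
        "denied": [(e["operation"], e["target"]) for e in mine if not e["allowed"]],
        "regions": sorted({e["region"] for e in mine}),
    }


print(reconstruct(LINES, "finance-agent"))
```

Notice what the summary cannot answer: why the agent acted and which policy made the decision. Those need their own fields in the audit record.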

Reliability patterns matter because agent failures compound downstream

This is the part many governance discussions miss: sovereignty is also about reliability.

When agents orchestrate across systems, failures compound. Retries can duplicate actions. Stale context can drive wrong decisions. Partial writes can leave systems inconsistent. Cascading failures can spread bad outcomes faster than any human workflow would.

For high-impact actions, I recommend five reliability rules:

- Approval gates for high-risk actions

Not every action should be autonomous. Writes to HR, finance, identity, or production infrastructure should require policy-based approval or human confirmation.

- Idempotent operations

If a retry happens, the downstream system should not create duplicate tickets, duplicate account changes, or duplicate financial entries.

- Bounded retries

Retries without limits turn transient faults into systemic incidents.

- Circuit breakers

If a dependency starts failing or returning anomalous results, stop the action path rather than letting the agent continue to amplify damage.

- Safe degradation modes

The fallback should be “retrieve and recommend” rather than “guess and act.”
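Three of these rules (idempotent operations, bounded retries, and a circuit breaker) fit in one small wrapper. This is a simplified sketch with hypothetical names. It assumes `max_attempts` is at least 1, and it has no backoff, no persistence, and no half-open recovery, all of which a real implementation would need.

```python
class CircuitOpen(Exception):
    """Raised when the action path has been stopped after repeated failures."""


class ActionRunner:
    def __init__(self, max_attempts: int = 3, failure_threshold: int = 3):
        self.max_attempts = max_attempts
        self.failure_threshold = failure_threshold
        self.consecutive_failures = 0
        self.completed = {}  # idempotency key -> result of the first successful run

    def run(self, key: str, action):
        if key in self.completed:
            return self.completed[key]  # replayed request: do not execute again
        if self.consecutive_failures >= self.failure_threshold:
            raise CircuitOpen("action path disabled; fall back to retrieve-and-recommend")
        last_error = None
        for _ in range(self.max_attempts):  # bounded retries
            try:
                result = action()
            except Exception as exc:
                last_error = exc
                self.consecutive_failures += 1
                if self.consecutive_failures >= self.failure_threshold:
                    break  # the breaker trips mid-loop; stop retrying
                continue
            self.consecutive_failures = 0
            self.completed[key] = result
            return result
        raise last_error


tickets = []


def create_ticket():
    tickets.append("INC-1")
    return "INC-1"


runner = ActionRunner()
runner.run("req-42", create_ticket)
runner.run("req-42", create_ticket)  # retry with the same idempotency key
print(len(tickets))
```

The caller decides what to do when `CircuitOpen` is raised. In line with the last rule, the safe choice is to stop acting and return a recommendation for a human to review.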

The same fail-closed discipline applies to boundary checks. A small rule engine for region pinning and connector allow-lists shows the idea. This is illustrative pseudocode, not production-ready policy code.

# Minimal sovereignty rule engine for region pinning and connector allow-list checks.
def evaluate(request_region: str, data_region: str, connector: str) -> dict:
    allowed_connectors = {"sharepoint-eu", "ticketing-api"}
    same_region = request_region == data_region
    connector_ok = connector in allowed_connectors
    return {
        "same_region": same_region,
        "connector_ok": connector_ok,
        "allowed": same_region and connector_ok,
    }


result = evaluate("EU", "EU", "sharepoint-eu")
print(result)

What to observe: even a minimal policy can enforce same-region handling and connector allow-listing. Real systems need more nuance, but these two checks alone prevent a surprising amount of accidental boundary drift.

What leaders should do in the next two quarters

If you are an enterprise leader, here is the practical playbook.

In the next 30 days

- Inventory every agent, copilot, connector, and automation path that can access enterprise data or trigger actions.

- Export app identities, service principals, and enterprise permission grants.

- Review Power Platform environments and connectors for uncontrolled reach.

For Microsoft 365 enterprise app permissions that may affect agent boundaries, start with a report like this. This is a first-pass inventory report that requires tenant-specific validation and enrichment before formal review.

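# Export Microsoft 365 enterprise app permissions that may affect agent sovereignty boundaries.
# Assumes the Microsoft Graph PowerShell SDK and an existing Connect-MgGraph session with directory read access.
# Covers delegated (OAuth2) permission grants only; application permissions are stored as app role assignments.
$servicePrincipals = Get-MgServicePrincipal -All
$grants = Get-MgOauth2PermissionGrant -All

$report = foreach ($sp in $servicePrincipals) {
    # A grant's ClientId is the object ID of the service principal that received consent.
    $spGrants = $grants | Where-Object { $_.ClientId -eq $sp.Id }

    foreach ($grant in $spGrants) {
        [pscustomobject]@{
            AppDisplayName = $sp.DisplayName
            ClientId       = $grant.ClientId
            ResourceId     = $grant.ResourceId
            ConsentType    = $grant.ConsentType
            Scope          = $grant.Scope
        }
    }
}

$report | Export-Csv ".\m365-agent-permissions.csv" -NoTypeInformation
$report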

What to do next: sort by broad scopes and tenant-wide consent. Anything with wide access or unclear ownership deserves immediate review.
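The sort can be sketched against the exported columns. `ConsentType` is `AllPrincipals` for tenant-wide consent and `Principal` for a single user, and `Scope` is a space-separated string. Treating any scope ending in `.All` as "broad" is a rough heuristic assumed for this example, and the sample rows are made up.

```python
import csv
import io

# Hypothetical rows shaped like m365-agent-permissions.csv.
SAMPLE = """AppDisplayName,ClientId,ResourceId,ConsentType,Scope
ticket-bot,sp-002,res-graph,Principal,User.Read
finance-agent,sp-001,res-graph,AllPrincipals,Files.ReadWrite.All Mail.Send
hr-helper,sp-003,res-graph,AllPrincipals,User.Read
"""


def broad_scopes(row):
    """Scopes that look tenant-wide under the '.All' suffix heuristic."""
    return [s for s in row["Scope"].split() if s.endswith(".All")]


def review_score(row):
    """0 = narrow, 1 = tenant-wide consent or broad scope, 2 = both."""
    tenant_wide = row["ConsentType"] == "AllPrincipals"
    return int(tenant_wide) + int(bool(broad_scopes(row)))


rows = list(csv.DictReader(io.StringIO(SAMPLE)))
ranked = sorted(rows, key=review_score, reverse=True)
for row in ranked:
    print(review_score(row), row["AppDisplayName"], broad_scopes(row))
```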

For Power Platform governance, export environments and connectors so you can identify where experimentation may already have production adjacency. This is also a first-pass inventory report that requires tenant-specific validation and enrichment.

# Export Power Platform environments and connectors for a sovereignty review.
# Assumes the Microsoft.PowerApps.Administration.PowerShell module and an admin session (Add-PowerAppsAccount).
$environments = Get-AdminPowerAppEnvironment
$connectors = Get-AdminPowerAppConnector

$report = foreach ($env in $environments) {
    # Pair each environment with the connectors reported for it.
    $envConnectors = $connectors | Where-Object { $_.EnvironmentName -eq $env.EnvironmentName }

    foreach ($connector in $envConnectors) {
        [pscustomobject]@{
            EnvironmentName = $env.DisplayName
            EnvironmentId   = $env.EnvironmentName
            Location        = $env.Location
            ConnectorName   = $connector.ConnectorName
            ConnectorId     = $connector.ConnectorId
            Tier            = $connector.Tier
        }
    }
}

$report | Export-Csv ".\powerplatform-connectors.csv" -NoTypeInformation
$report

What to observe: location, environment segmentation, and connector spread tell you where sovereignty boundaries are weak before an incident tells you the hard way.
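Two quick readings of that report are connector spread (the same connector reachable from several environments) and environments outside an approved location. The sketch below uses hypothetical rows with the exported column names. The location strings and the approved set are assumptions for illustration.

```python
from collections import defaultdict

# Hypothetical rows shaped like powerplatform-connectors.csv.
ROWS = [
    {"EnvironmentName": "Finance Prod", "Location": "europe", "ConnectorName": "sap-custom"},
    {"EnvironmentName": "Sandbox", "Location": "unitedstates", "ConnectorName": "sap-custom"},
    {"EnvironmentName": "Finance Prod", "Location": "europe", "ConnectorName": "ticketing-custom"},
]


def connector_spread(rows):
    """Connectors that appear in more than one environment, with those environments."""
    seen = defaultdict(set)
    for row in rows:
        seen[row["ConnectorName"]].add(row["EnvironmentName"])
    return {name: sorted(envs) for name, envs in seen.items() if len(envs) > 1}


def out_of_region(rows, allowed_locations):
    """Environment names whose location is not in the approved set."""
    return sorted({r["EnvironmentName"] for r in rows if r["Location"] not in allowed_locations})


print(connector_spread(ROWS))
print(out_of_region(ROWS, {"europe"}))
```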

In the next 60–90 days

- Classify agent use cases by action authority, data sensitivity, and dependency on hosted service boundaries.

- Standardize a control baseline across identity, environment segmentation, DLP, logging, approval workflows, and incident response.

- Separate governed retrieval paths from constrained action paths.

- Require a documented sovereignty map for every production agent:

  - data boundary,

  - identity boundary,

  - action boundary,

  - audit boundary.

That is the maturity threshold. No sovereignty map, no production autonomy.
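The gate can be enforced mechanically if the sovereignty map is a structured record instead of a slide. In this sketch the `SovereigntyMap` class, its field contents, and `production_ready` are hypothetical. The four fields mirror the four boundaries named above.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class SovereigntyMap:
    agent: str
    data_boundary: Optional[str] = None      # where data is stored and processed
    identity_boundary: Optional[str] = None  # which identity the agent acts as
    action_boundary: Optional[str] = None    # which tools it may invoke
    audit_boundary: Optional[str] = None     # where evidence is recorded

    def missing(self) -> list:
        """Names of boundaries that have not been documented."""
        return [
            f.name
            for f in fields(self)
            if f.name != "agent" and not getattr(self, f.name)
        ]


def production_ready(m: SovereigntyMap) -> bool:
    """No sovereignty map, no production autonomy."""
    return not m.missing()


draft = SovereigntyMap(agent="finance-agent", data_boundary="EU data lake only")
print(production_ready(draft), draft.missing())
```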

Conclusion: sovereignty is how enterprises earn the right to automate

The future does not belong to the most permissive AI stack. It does not belong to the most restrictive one either. It belongs to the enterprise that can automate confidently because it knows exactly where control begins and ends.

That is why I am opinionated on this: data sovereignty is not anti-AI. It is the discipline that makes autonomous AI survivable at scale.

Build agents on governed data planes. Enforce identity-aware access. Constrain action paths. Apply risk-tiered controls so high-impact autonomy gets the strongest safeguards without slowing every low-risk use case. If your agents can act across systems, your architecture must prove that they can also be constrained, observed, and stopped.

Rate your team’s sovereign-AI posture from 1 to 5: which boundary is weakest today—data, identity, action, or audit?

#EnterpriseAI #DataGovernance #AIAgents

Sources & References

- Microsoft Fabric documentation

- Azure Architecture Center

- Microsoft Intune documentation

- Microsoft Power Platform documentation

- Microsoft Purview

- Security and governance - Microsoft Copilot Studio

- Security hub

- Agents for Microsoft 365 Copilot

- Microsoft 365 Copilot Chat Privacy and Protections

- Choose between Agent Builder in Microsoft 365 Copilot and Copilot Studio to build your agent
